A note from Raoul Kumar, Director of Platform Development & Success, Qyrus

As this year comes to a close, I want to begin with a simple but heartfelt thank you.

To every tester, developer, and team that chose qAPI—tried it, challenged it, broke it, and helped shape it—this journey would not have been possible without you.

2025 was not just a year of shipping features.

It was a year of listening deeply, questioning assumptions, and doubling down on what truly matters: helping teams test APIs with confidence, clarity, and speed—without friction.

This is our look back at what we built, why we built it, and what the world of real testing taught us along the way.

Here’s to everything we learned in 2025—and to an even stronger 2026 ahead.

— Raoul Kumar, Director of Platform Development & Success, Qyrus

It Started With a Problem We Knew Too Well

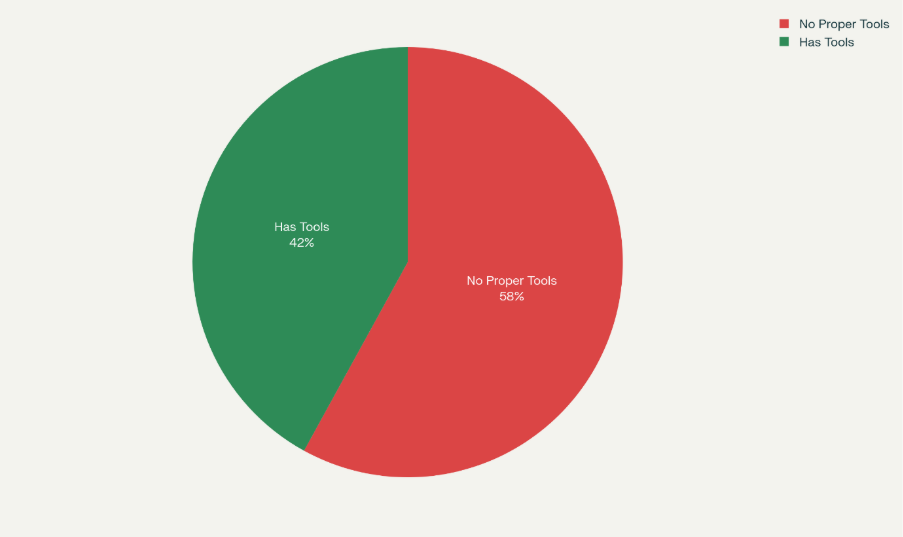

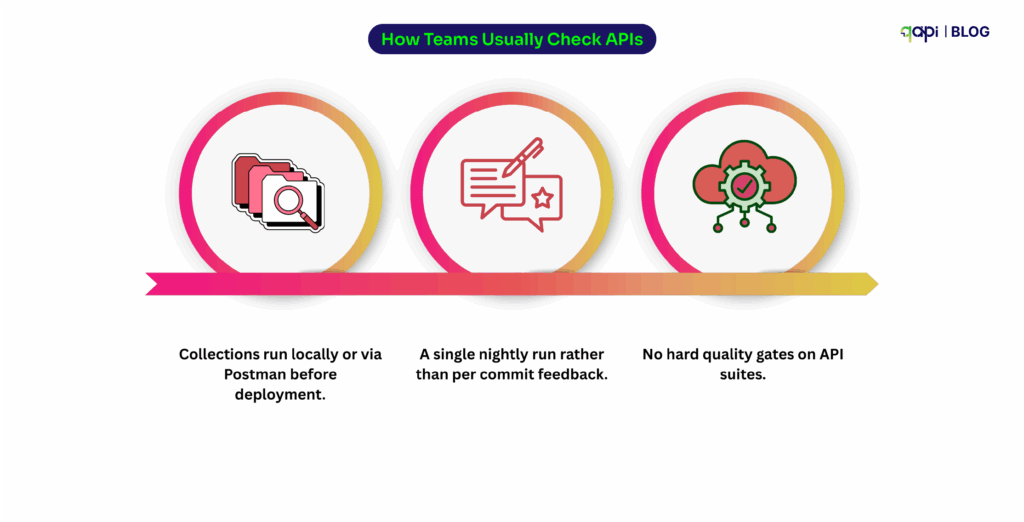

We’ve been testers. We’ve seen the frustration of juggling tools that weren’t designed for QA teams.

We’ve seen how API testing was often treated as an afterthought — complex, code-heavy, and disconnected from real business flows.

So we asked a simple but powerful question: What if API testing actually worked the way testers think?

Not just functional checks. Not just scripts. But end-to-end confidence — from functional to process to performance — all in one place.

That question became qAPI.

A Strong Start: Reimagining qAPI from the Inside Out

We started the year by asking ourselves a hard question:

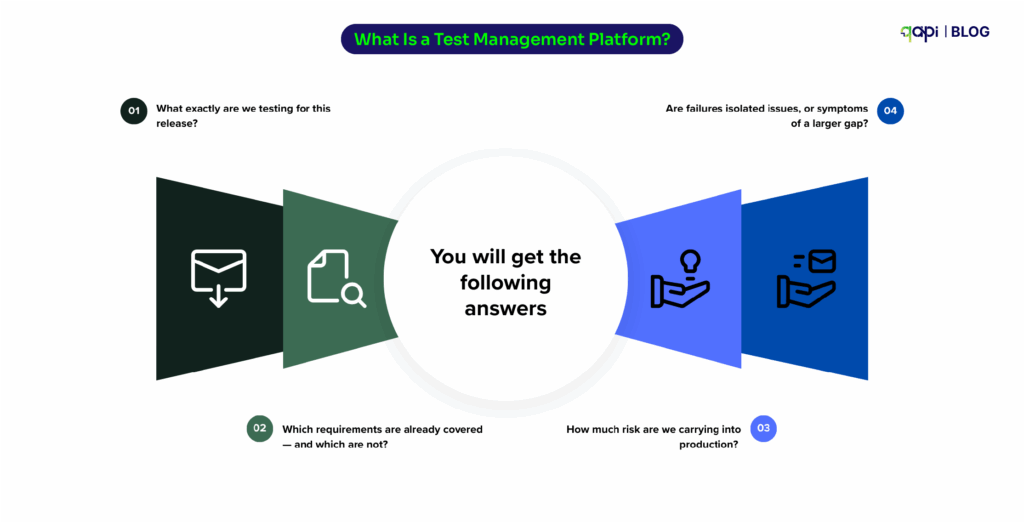

Is qAPI truly aligned with how teams test APIs today—or how they need to test them tomorrow?

That question led directly to the qAPI rebrand and UI refresh. We decided then that the goal wasn’t just to improve the UI/UX. It was to go a step further and make the platform easy to use and seamless.

To answer that question, we began with one of the largest internal, cross-functional gatherings we’ve ever had. Engineering, product, sales, marketing, and customer teams came together with one shared goal: to deeply understand how qAPI fits into real testing workflows and how it could do even more.

It was a session to show how the new platform works end-to-end, how no-code automation can remove barriers for testers, how developers can move faster without sacrificing quality, and how organizations can eliminate manual overhead without losing control.

We answered several questions, gave a live demo, and helped our teams understand and get used to the qAPI application. With this, we got the push we needed as the word spread internally and to other folks in the testing space.

It worked in our favour because:

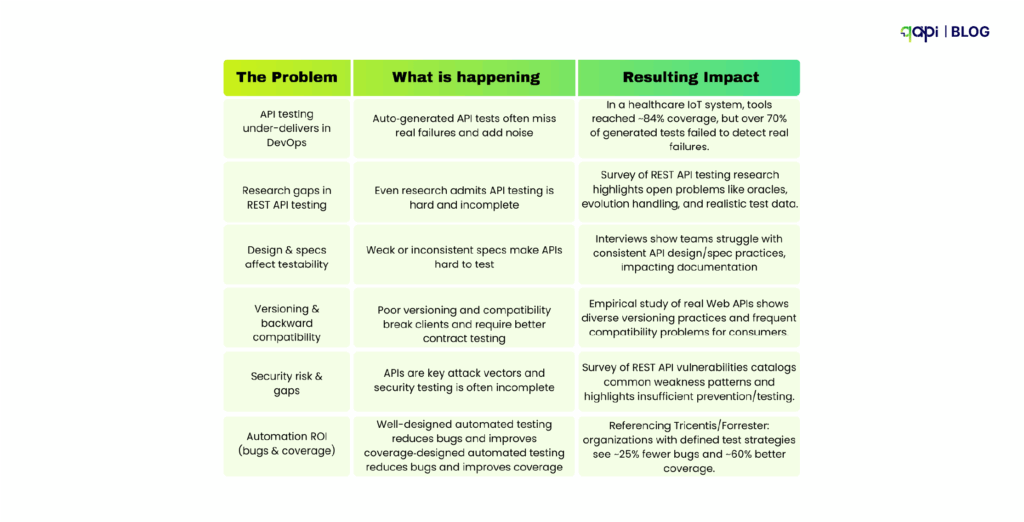

We Listened Closely: What the Market Needs

As teams around the world started running their API tests with qAPI, we saw them facing a different kind of problem.

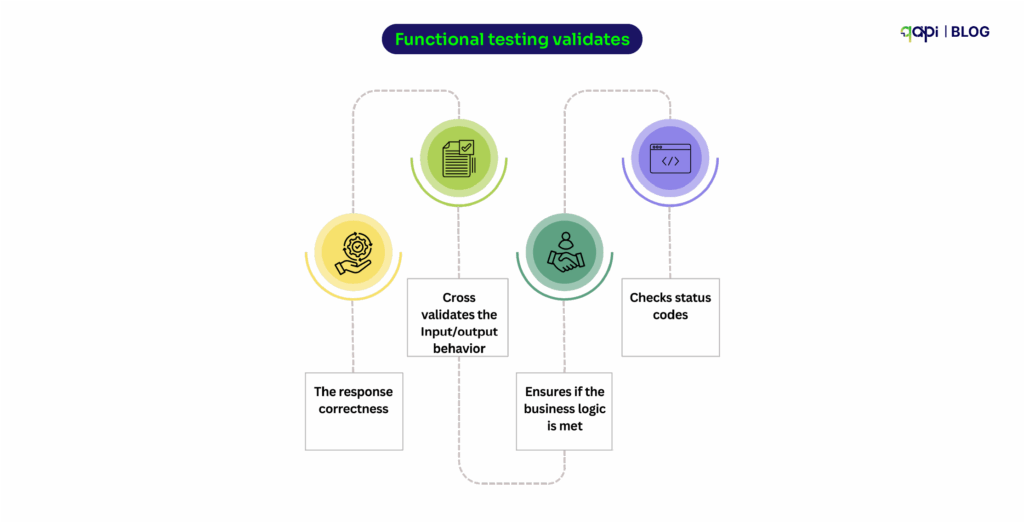

Tests existed, but teams didn’t always trust them. Failures were sometimes caused by timing issues, shared environments, unstable data, or inconsistent API responses rather than real regressions.

This put teams in a bind: they either ignored failures or spent too much time working out whether a test was lying. We realized we needed to fix this so teams could regain predictability and structure.

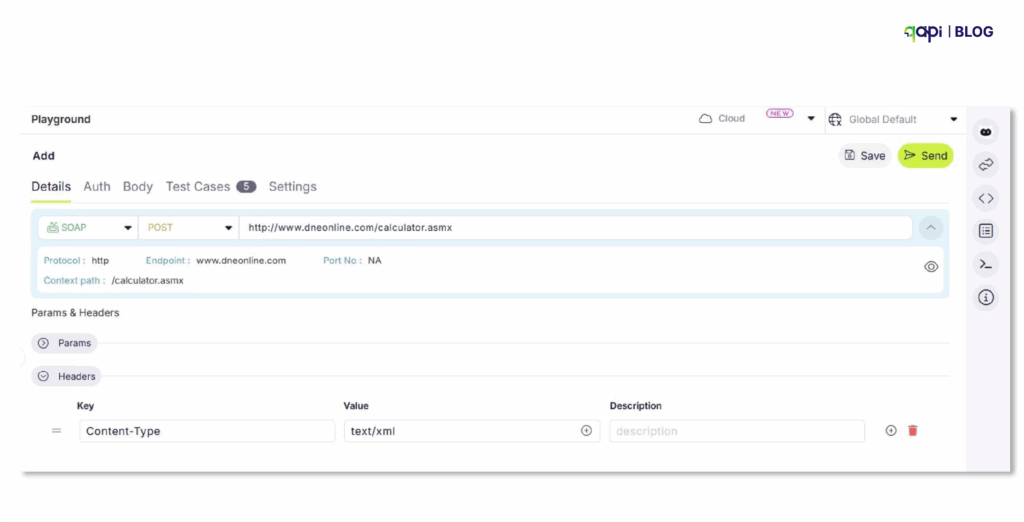

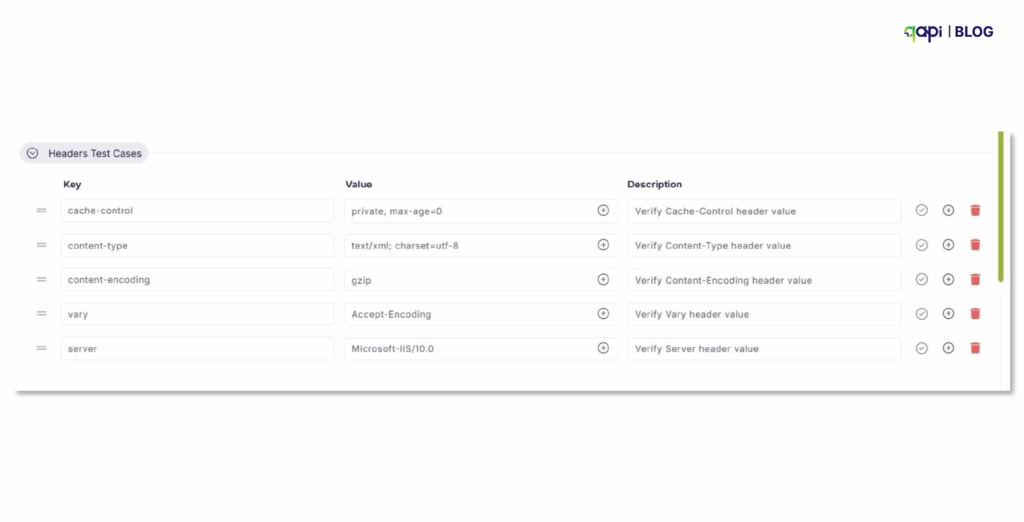

This is where our development team shifted focus toward improving how teams manage environments, validate responses, and maintain consistency across APIs. The result was clearer response structures, better handling of test data, and cleaner separation between environments, which helped reduce noise and make failures meaningful again.

Read more about Shared workspaces.

Around this time, we also released the Beautify feature in qAPI. It may seem small, but it addressed a real pain: code is often messy and hard to read. Whether you’re testing APIs or preparing to deploy, Beautify keeps your code clean and structured.

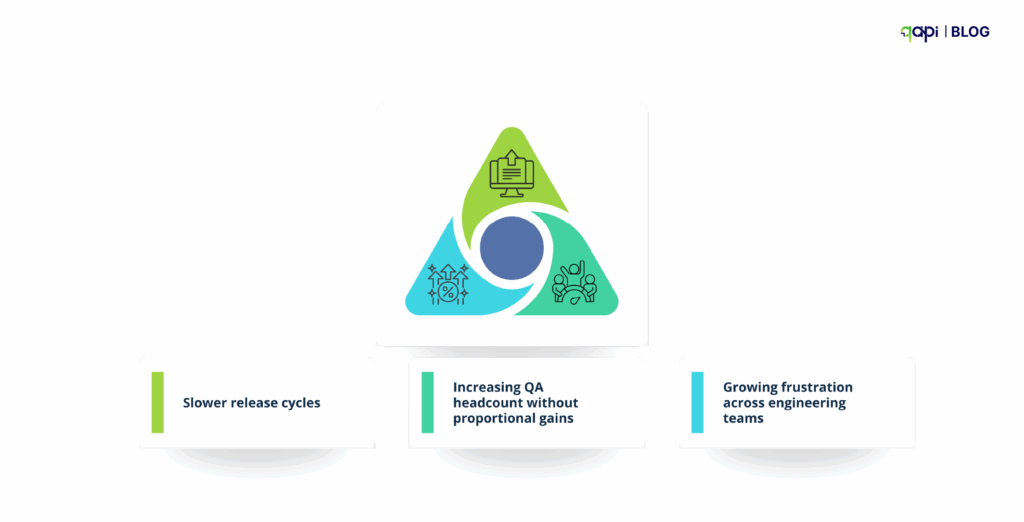

Reliability, Scale, and the Pressure to Move Faster

Over the next few months, we saw growing concern around reliability, with users asking questions like: “This API works, but how do I check its limitations?” and “Will the API stay stable under real traffic?”

When we spoke with testers and other users, here is what they told us:

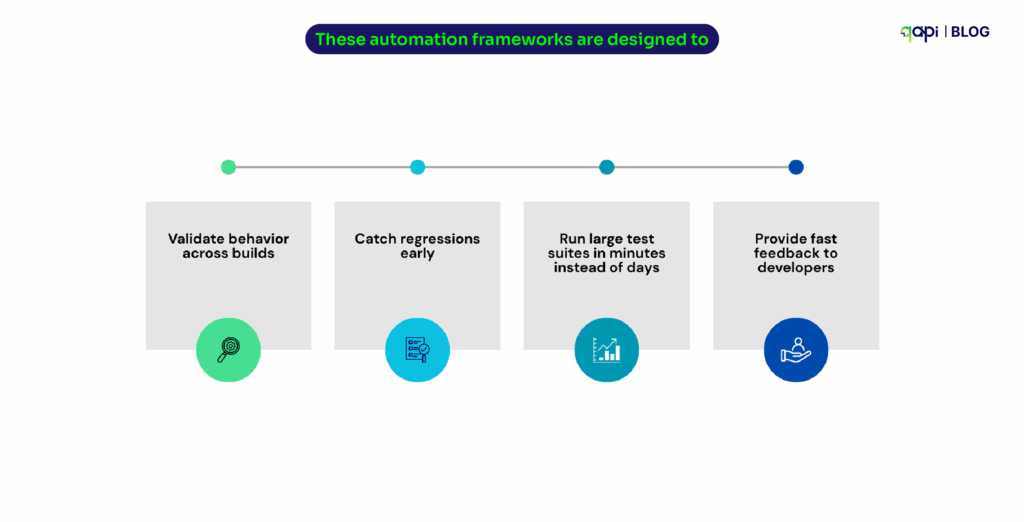

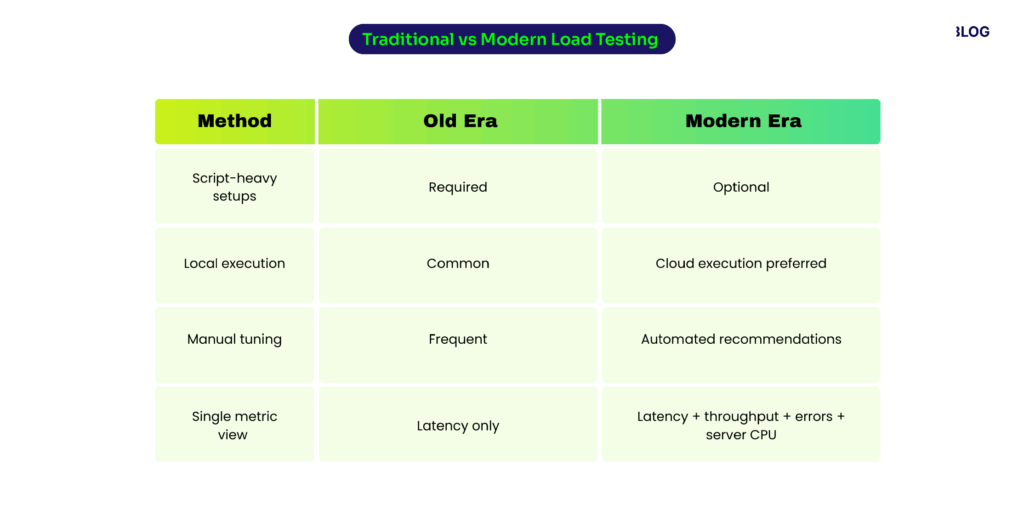

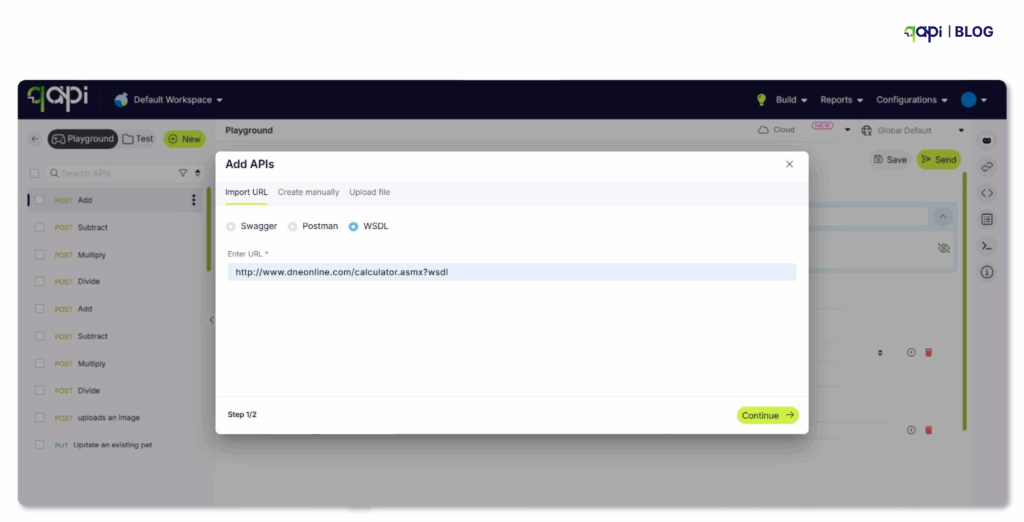

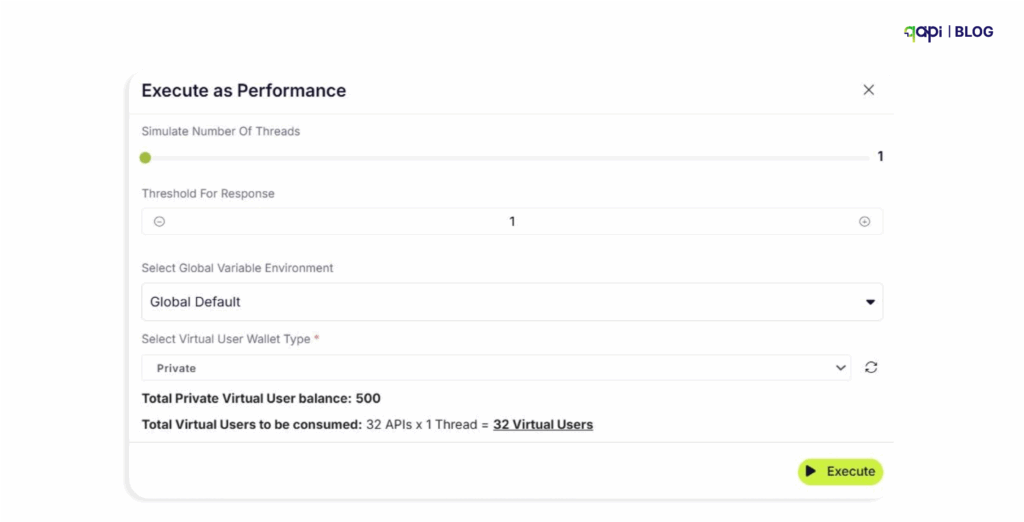

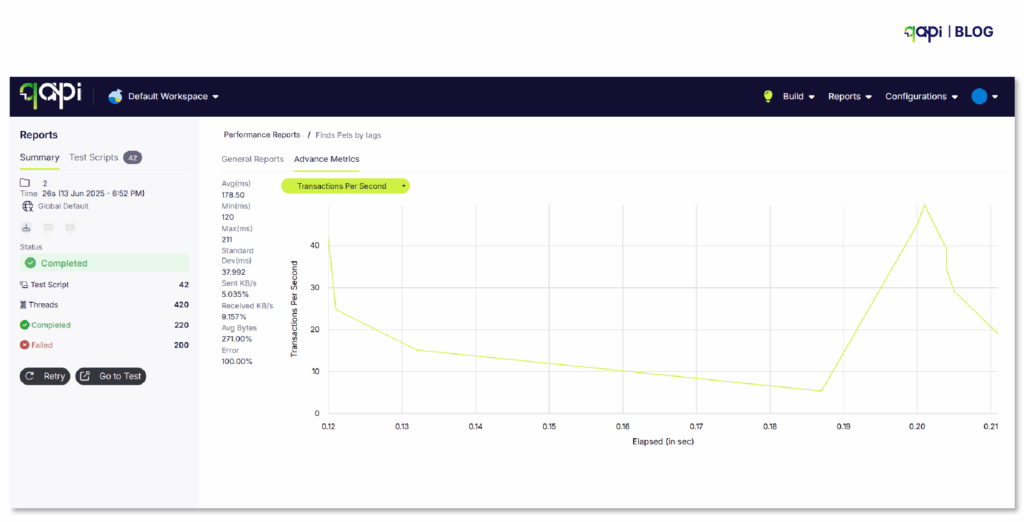

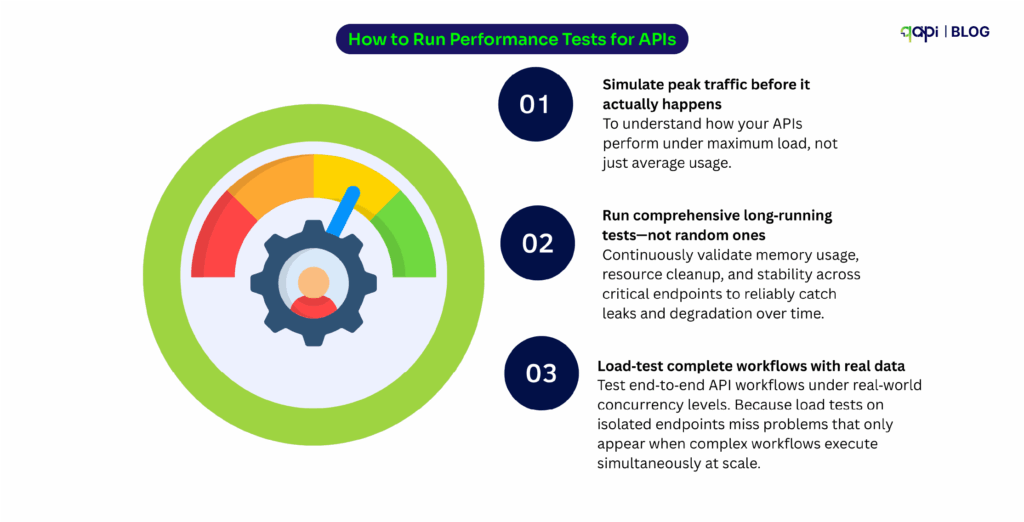

They wanted a way to flood the service with multiple requests and identify any lapse in performance under load. But existing load testing methods felt disconnected: heavy tools, separate workflows, and long setup times. Our teams decided to solve this with a pay-as-you-go load testing update, Virtual User Balance (VUB).

The goal was never to replace performance engineering. It was to close the gap between correctness and scale—so teams could catch performance issues before they reached production.

We gave away 500 free virtual users, no questions asked, just to get the ball rolling!

Next, we hosted a webinar to address the misconceptions holding teams back. In our session, “Debunking the Myths of API Testing,” we cleared up the confusion surrounding API quality, challenging the persistent ideas that it is too complex, requires heavy coding, or is secondary to UI testing. By breaking down these barriers, we demonstrated how qAPI, an end-to-end API testing tool, can make API testing accessible and essential for early bug detection, empowering teams to shift left with confidence.

Watch the Webinar Here

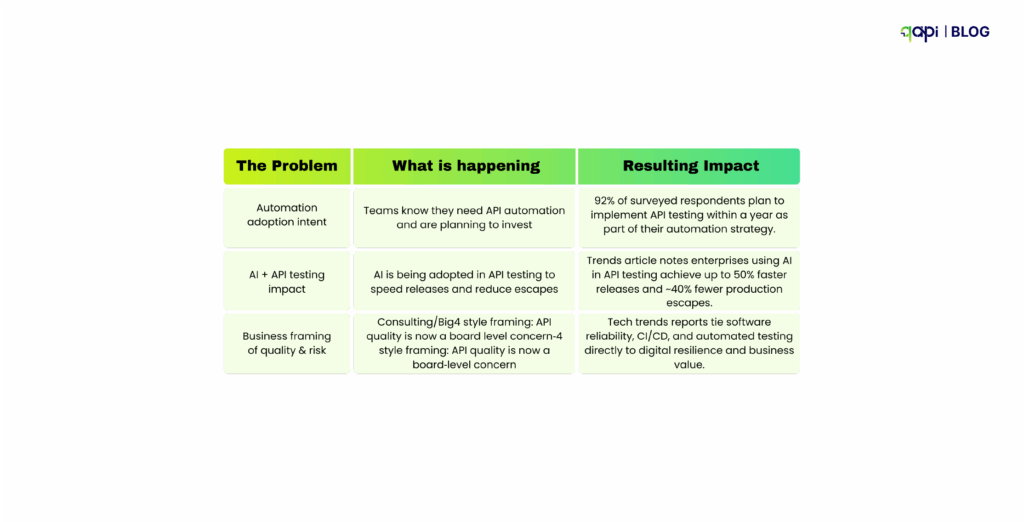

APIs Moved to the Center Stage

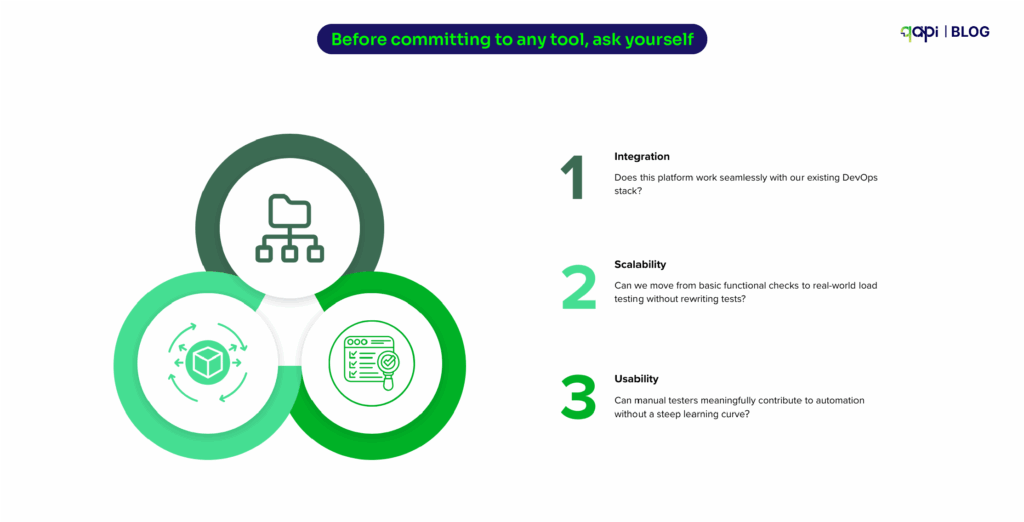

At API World (September 3–5), APIdays London (September 22–25), StarWest (September 23–25), and APIdays India (October 8–9), we had some interesting conversations with engineering leaders who described the problems they face.

We used those problem statements to demonstrate the power of qAPI. By showing attendees how they can execute end-to-end tests, moving from functional to process to performance testing within a single interface, we showed that you don’t need a complex, disjointed toolchain to build scalable APIs.

A snippet from API World: https://www.youtube.com/watch?v=ZVIa7kDMF9I

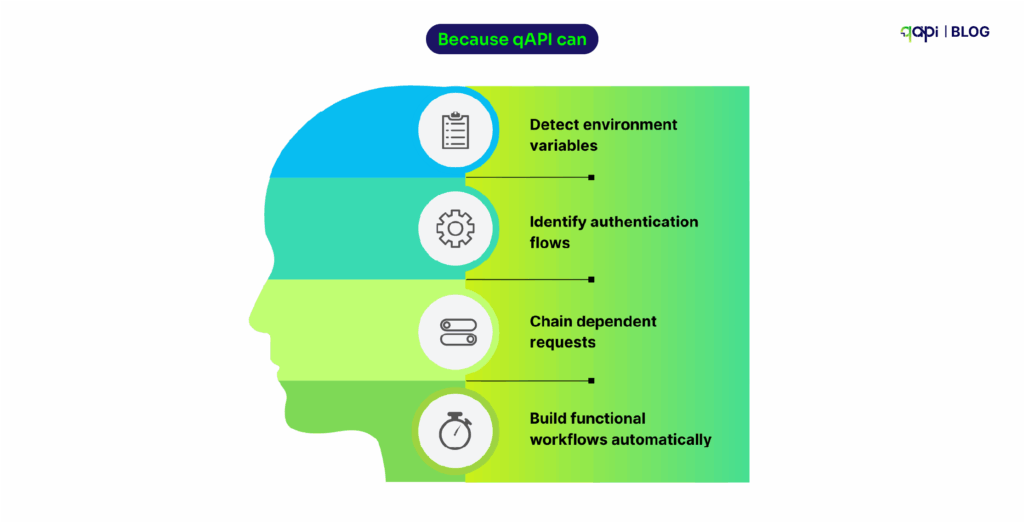

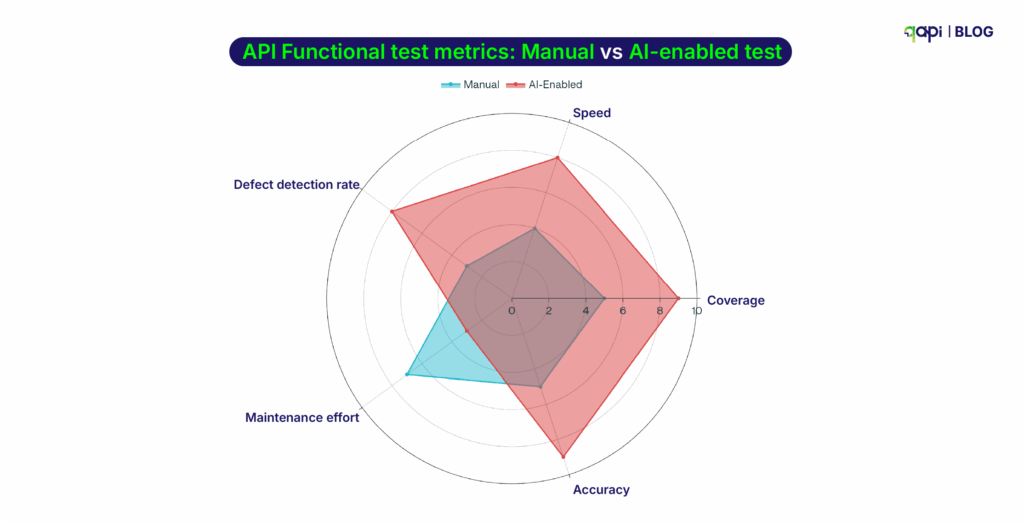

Raoul Kumar took the stage twice—first with a hands-on workshop on using agentic orchestration to test APIs, and later with a keynote that explored the future of API testing through a no-code, cloud-first lens.

At APIdays India, Ameet Deshpande gave a talk that really resonated with the crowd. He explained why old ways of testing just can’t keep up with today’s complex, AI-powered world. He stated that we need smarter, AI-led tools to manage the workload. The next day, Ameet hosted a workshop along with Punit Gupta, where attendees saw qAPI in action. They learned how using AI “agents” to run tests can help them check much more of their software and ship it faster.

These conversations directly influenced our push toward shared workspaces in qAPI, enabling teams to collaborate, manage environments, and scale testing together — rather than working in disconnected groups.

With this update, teams can view and make changes in dedicated environments, and teammates can access the updated APIs directly, without checking with each other to get the latest dataset.

Developers at the Center

APIdays India, Bengaluru – Oct 8–9

India’s scale demands a different approach to quality. Through talks and hands-on workshops, Qyrus demonstrated how agentic orchestration can dramatically expand API test coverage without slowing delivery.

Our team spent two energizing days connecting with developers, QA leaders, and digital architects who are building API-first systems for one of the world’s fastest-growing digital economies. Ameet Deshpande’s talk on why API testing needs to change struck a strong chord, highlighting how traditional QA struggles in AI-driven, highly connected ecosystems, and why agentic orchestration and multimodal testing are becoming essential.

That thinking came to life during a packed, hands-on workshop with Ameet and Punit Gupta, where attendees saw firsthand how directing AI agents can dramatically expand API test coverage and accelerate delivery.

HackCBS 8.0, New Delhi – Nov 8–9

Partnering with India’s largest student-run hackathon reminded us why accessibility matters. Students embraced API testing as an enabler, validating ideas faster and building with confidence from day one.

Being surrounded by thousands of passionate student builders, innovators, and problem-solvers was a powerful reminder of why quality and experimentation matter from day one.

Through hands-on workshops led by Punit Gupta and engaging conversations at our booth, we introduced qAPI as a practical, developer-friendly way to test and validate prototypes faster without slowing creativity. What stood out most was the curiosity and confidence with which students approached API testing, asking thoughtful questions and immediately applying what they learned to their ideas.

Before we ended the year, we added a few more updates!

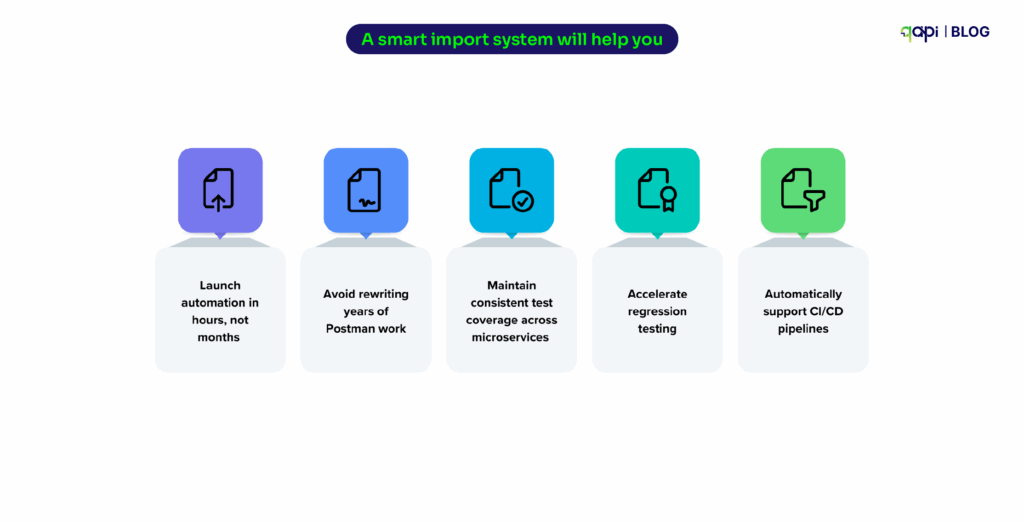

Import via cURL

Developers already use cURL to debug APIs. Turning that into an automated test used to mean manual rework. With Import via cURL, a working command becomes a test in seconds—closing the gap between manual checks and automation.
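To make the idea concrete, here is a minimal Python sketch of what turning a cURL command into a structured request can look like. It is an illustration only, not how qAPI implements Import via cURL: the `ParsedRequest` class, the `parse_curl` function, and the `api.example.com` URL are all hypothetical, and only a handful of common single-line flags (`-X`, `-H`, `-d`) are handled.

```python
import shlex
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ParsedRequest:
    """Hypothetical container for the parts of a request a test would need."""
    method: str = "GET"
    url: str = ""
    headers: Dict[str, str] = field(default_factory=dict)
    body: Optional[str] = None


def parse_curl(command: str) -> ParsedRequest:
    """Parse a single-line cURL command into a ParsedRequest (sketch only)."""
    tokens = shlex.split(command)  # shell-style splitting, honours quotes
    if not tokens or tokens[0] != "curl":
        raise ValueError("expected a command starting with 'curl'")

    request = ParsedRequest()
    explicit_method = False
    i = 1
    while i < len(tokens):
        token = tokens[i]
        if token in ("-X", "--request"):
            request.method = tokens[i + 1].upper()
            explicit_method = True
            i += 2
        elif token in ("-H", "--header"):
            name, _, value = tokens[i + 1].partition(":")
            request.headers[name.strip()] = value.strip()
            i += 2
        elif token in ("-d", "--data", "--data-raw"):
            request.body = tokens[i + 1]
            i += 2
        elif token.startswith("-"):
            i += 1  # assumption: other flags are skipped, not interpreted
        else:
            request.url = token
            i += 1

    # cURL sends POST when a body is given and no method is specified.
    if request.body is not None and not explicit_method:
        request.method = "POST"
    return request


command = (
    "curl -X POST https://api.example.com/orders "
    "-H 'Content-Type: application/json' "
    "-d '{\"sku\": \"A1\"}'"
)
print(parse_curl(command))
```

Once the method, URL, headers, and body are captured as data, a test runner can replay the request and attach assertions to the response, which is the step that used to require manual rework.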

Expanded Code Snippets

By adding C# (HttpClient) and cURL snippets, testers and developers can now share executable logic—not screenshots or assumptions. Testing feeds development instead of running parallel to it.

AI Summaries

As workflows grow complex, understanding why a test exists becomes harder than running it. AI Summaries make tests readable, explainable, and safer to maintain—especially during onboarding and incident reviews.

As we step back and look at everything that unfolded over the year—the product decisions we made, the conversations we had across global stages, and the feedback we heard directly from developers and testers—a clear pattern emerges. Each update solved the problems we’d seen repeatedly — in conversations, workshops, and real customer workflows.

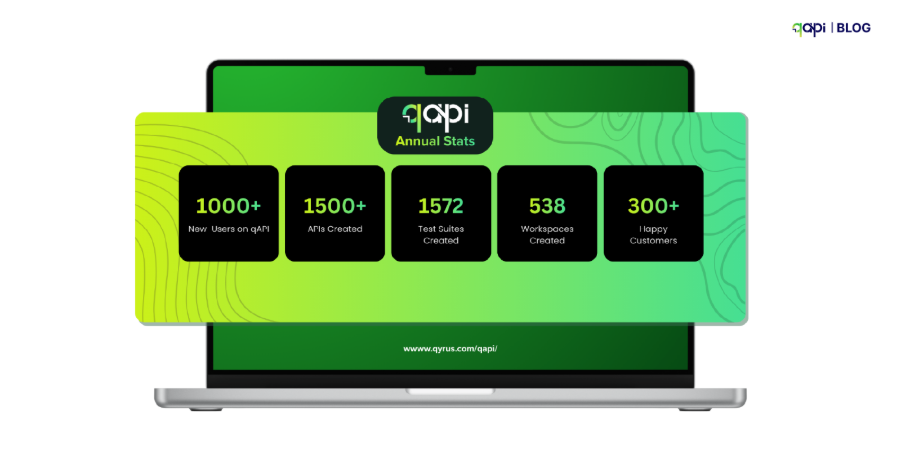

Over the past year, qAPI has grown from an API testing tool into a platform teams rely on every day—across development, QA, and delivery—to move faster with confidence. What started as a way to simplify API testing has evolved into something much bigger: a system that helps teams design better APIs, test earlier, collaborate more effectively, and trust their releases in increasingly complex environments.

As we look ahead, the ambition only grows. The coming year will bring deeper intelligence, tighter workflows, and even more ways for developers and testers to work in sync—without friction, without guesswork, and without compromising quality.

Thank you for building with us, challenging us, and shaping qAPI along the way. There’s a lot more coming—and we’re just getting started.