The Context

Passing individual API tests doesn’t mean your workflows work. This post covers 5 practical ways to get the most out of API workflow testing, from chaining calls correctly to making your tests survive real-world change. Discover how qAPI streamlines these complex processes, making execution significantly less painful.

Ask any QA engineer to name their primary frustration, and you’ll likely hear a variation of the same answer:

“My tests pass in isolation but the workflow breaks in staging.”

It shows up constantly across communities like r/QualityAssurance and r/softwaretesting.

An engineer runs their suite, the dashboard stays green, and confidence is high—until the push to staging. Suddenly, a critical multi-step flow collapses.

The problem is almost never a broken endpoint; it’s usually a broken sequence. The order of calls is incorrect. A token from step one wasn’t passed to step three. Or a status change in one service wasn’t reflected in another quickly enough to satisfy a dependency. Individual endpoint tests are just that: individual. They tell you each piece works in isolation. They say almost nothing about whether those pieces work together, in the right order, under realistic conditions.

That’s what API workflow testing is for. And most teams either aren’t doing it, or they’re doing it in a way that breaks the moment the API changes.

Here are 5 ways to actually get it right — and how qAPI helps you get there without rewriting everything from scratch every sprint.

Stop Testing Endpoints. Start Testing Journeys.

The most common mistake in API testing isn’t technical — it’s conceptual. Teams build a test for each endpoint and call it done. POST /users passes. GET /orders passes. POST /payments passes. Ticket closed.

But real user flows don’t work like that. A user registers, gets a verification email, confirms their account, logs in, browses products, adds to cart, and checks out. Each one of those actions is an API call. Each one depends on the output of the one before it. The ID returned by POST /users becomes the input to GET /users/{id}. The order ID from POST /orders has to be passed to POST /payments. Break the chain at any link and the whole workflow silently fails.

The fix: Map your user journeys before you write a single test. For every critical business flow in your product — signup, purchase, booking, whatever your core workflows are — draw out the sequence of API calls involved. Then write tests for the sequence, not just the endpoints.
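As a rough illustration, a journey test threads each response into the next call instead of checking endpoints one by one. The sketch below is in Python and uses a hypothetical in-memory `FakeShopApi` in place of a real HTTP client; the class, its method names, and the payload fields are assumptions made for this example, not part of any real API.

```python
import itertools


class FakeShopApi:
    """In-memory stand-in for a real HTTP API (hypothetical, illustration only)."""

    def __init__(self):
        self._ids = itertools.count(1)
        self.users = {}
        self.orders = {}

    def post_users(self, email):
        user_id = next(self._ids)
        self.users[user_id] = {"id": user_id, "email": email}
        return 201, self.users[user_id]

    def get_user(self, user_id):
        if user_id not in self.users:
            return 404, {"error": "user not found"}
        return 200, self.users[user_id]

    def post_orders(self, user_id, sku):
        if user_id not in self.users:
            return 404, {"error": "user not found"}
        order_id = next(self._ids)
        self.orders[order_id] = {"id": order_id, "user_id": user_id,
                                 "sku": sku, "status": "pending"}
        return 201, self.orders[order_id]

    def post_payments(self, order_id, amount):
        order = self.orders.get(order_id)
        if order is None:
            return 404, {"error": "order not found"}
        order["status"] = "paid"
        return 201, {"order_id": order_id, "amount": amount, "status": "captured"}


def run_purchase_journey(api, email, sku, amount):
    """Run signup -> fetch user -> order -> payment, threading IDs between steps."""
    status, user = api.post_users(email)
    assert status == 201, f"POST /users returned {status}"

    # The ID from step one is the input to step two.
    status, fetched = api.get_user(user["id"])
    assert status == 200, f"GET /users/{{id}} returned {status}"
    assert fetched["email"] == email

    status, order = api.post_orders(user["id"], sku)
    assert status == 201, f"POST /orders returned {status}"

    # The order ID from step three is the input to step four.
    status, payment = api.post_payments(order["id"], amount)
    assert status == 201, f"POST /payments returned {status}"
    return payment
```

Calling `run_purchase_journey(FakeShopApi(), "a@example.com", "SKU-1", 19.99)` exercises the whole sequence in one test; swapping the fake for a real client would keep the same shape.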

In qAPI, you can build these workflow chains visually, linking calls together and passing response values from one step to the next automatically. You define the journey once. qAPI handles the data threading — extracting IDs, tokens, and values from each response and injecting them into the next call without manual scripting. For teams that have spent hours debugging “why is step 4 failing with a 404,” this alone removes a huge class of problems.

Chain Your Calls — And Actually Validate What Passes Between Them

Chaining API calls is step one. Validating what moves between them is step two — and most teams skip it entirely.

Here’s a common scenario: POST /orders returns a 201 with an order ID. That ID gets passed to PATCH /orders/{id}/confirm. The confirm call returns a 200. Test passes. But nobody checked whether the order ID that came back from step one was actually valid, or whether the status in the database actually changed, or whether the confirmation response contained the right fields to trigger the next downstream action.

You’re asserting “it didn’t crash.” You’re not asserting “it did the right thing.”

What to validate at each step in a chain:

- The response status is the right status — not just any 2xx

- The values being extracted and passed forward actually exist in the response (don’t assume the field name or structure is stable)

- The state of the system changed the way it should — sometimes this means a follow-up GET call to verify, not just trusting the response

- Error responses in the middle of a chain are caught and handled — not silently swallowed
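The checklist above could be sketched in Python roughly as follows. The helper names (`StepFailure`, `expect_status`, `extract`) and the three client callables are hypothetical; each callable is assumed to return a `(status, body)` pair, and `"confirmed"` is an assumed status value.

```python
class StepFailure(AssertionError):
    """Raised at the step where the chain first goes wrong."""


def expect_status(step, actual, expected):
    # Require the exact status, not just any 2xx.
    if actual != expected:
        raise StepFailure(f"{step}: expected status {expected}, got {actual}")


def extract(step, body, field, expected_type):
    """Pull a value out of a response, failing loudly if it is missing or mistyped."""
    if not isinstance(body, dict) or field not in body:
        raise StepFailure(f"{step}: response has no field {field!r}")
    value = body[field]
    if not isinstance(value, expected_type):
        raise StepFailure(
            f"{step}: field {field!r} should be {expected_type.__name__}, got {value!r}"
        )
    return value


def confirm_order_flow(create_order, confirm_order, get_order):
    """Create an order, confirm it, then verify the state change with a follow-up GET."""
    status, body = create_order()
    expect_status("POST /orders", status, 201)
    order_id = extract("POST /orders", body, "id", int)

    status, body = confirm_order(order_id)
    expect_status("PATCH /orders/{id}/confirm", status, 200)

    # Do not trust the confirm response alone; read the state back.
    status, body = get_order(order_id)
    expect_status("GET /orders/{id}", status, 200)
    state = extract("GET /orders/{id}", body, "status", str)
    if state != "confirmed":
        raise StepFailure(f"GET /orders/{{id}}: status is {state!r}, expected 'confirmed'")
    return order_id
```

If the `id` field is renamed upstream, the failure is reported at `POST /orders` rather than surfacing later as a null ID in a downstream call.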

This is where most hand-rolled test scripts fall down. Developers wire up the happy path, it works, the test stays green, and six months later someone adds a new field to the response schema, the extraction breaks, and suddenly POST /payments is receiving a null order ID and nobody knows why.

qAPI handles this with response mapping and inline assertions at each chain step. You can define exactly what fields to extract, validate that they meet expected conditions, and only pass them forward when they do. If an intermediate step returns something unexpected, the workflow fails immediately at that step — with the exact request, response, and assertion that broke — rather than three calls later with a confusing error.

You should test what’s actually happening in your system, not just whether your API is alive.

Use Realistic Data — Not the Same Three Test Fixtures

There’s a quiet epidemic in API testing: everyone uses the same test data. The same email address. The same user ID. The same product SKU. It works for the first test. It works for the second. By the time you have thirty tests all creating a user with test@example.com, they’re stepping on each other, failing intermittently, and you’re spending more time debugging test data conflicts than actual bugs.

Flaky tests, meaning tests that randomly pass and fail without any code change, are a recurring complaint in QA threads on Reddit and Quora. The root cause, more often than not, is shared or static test data.

Practical rules for workflow test data:

Each workflow run needs its own data. Generate unique values dynamically — timestamps, UUIDs, randomised strings. Don’t hard-code an email address that five parallel test runs will all try to register simultaneously.

Test realistic edge cases, not just clean inputs. Real users send special characters in name fields. They send very long strings. They upload files in unexpected formats. Workflows that handle “John Smith” flawlessly can silently choke on “François Müller” or a name with an apostrophe. If your workflow processes financial data, test the boundary — what happens at exactly $0.00, at the credit limit, at an amount with a long decimal?

Mirror what production actually looks like. The best test data comes from anonymised production traffic, not from what seemed reasonable when you wrote the test at 4pm on a Thursday.
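A minimal Python sketch of the first two rules, using only the standard library. The function names, the sample names, and the `credit_limit` parameter are illustrative assumptions; real edge cases should come from your own product and traffic.

```python
import uuid
from decimal import Decimal

# Names that clean-input tests tend to miss: accents, apostrophes, very long strings.
EDGE_CASE_NAMES = ["François Müller", "Miles O'Brien", "x" * 300]


def unique_email(prefix="wf-test"):
    """Return an address that parallel runs will not collide on."""
    return f"{prefix}-{uuid.uuid4().hex}@example.com"


def signup_payloads():
    """Build one signup payload per edge-case name, each with its own email."""
    return [{"email": unique_email(), "name": name} for name in EDGE_CASE_NAMES]


def boundary_amounts(credit_limit):
    """Amounts at and just around the boundaries, plus one with extra decimals."""
    cent = Decimal("0.01")
    return [Decimal("0.00"), cent, credit_limit, credit_limit + cent, Decimal("10.005")]
```

Using `Decimal` rather than `float` keeps the boundary values exact, which matters when the test is specifically about what happens at the limit.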

qAPI can generate and inject dynamic test data at the workflow level — randomising values per run, parameterising inputs by environment, and pulling from data sets that reflect real-world usage patterns. This means parallel test runs don’t collide, and your edge case coverage reflects what real users actually do.

This Is How You Build Workflows That Survive API Changes

APIs change. Fields get renamed. New required parameters appear. Response schemas get updated. Status codes shift. In a growing product, this happens constantly — and it’s the single biggest reason test suites decay.

Most teams deal with this reactively. The CI build goes red, someone investigates, finds that user_id is now userId, updates the test, marks it fixed. Multiply that across twenty endpoints and three sprints and you have a team that spends more time maintaining tests than writing new ones.

The smarter approach is to build your workflow tests so they’re as resilient as possible from the start — and to know immediately when something structurally changes, rather than finding out when a test breaks in the middle of a release.

How to build change-resilient workflow tests:

Use contract-based assertions rather than hardcoded values. Instead of asserting that the status field equals “active”, assert that the status field exists, is a string, and is one of the valid enum values. This survives a value change without breaking. Reserve exact-value assertions for things that should never change — like a specific error code for a specific violation.

Don’t assert on every field in the response. Assert on the fields that matter for the next step in the workflow. Asserting on everything means every schema addition becomes a test failure. Be specific about what you care about.

Separate workflow logic from environment config. Base URLs, auth tokens, and environment-specific IDs live in configuration, not in test files. When you deploy to a new environment, you change the config — not twenty tests.
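These rules might look like the following in Python. The set of valid statuses, the environment variable names (`API_BASE_URL`, `API_TOKEN`), and the default URL are all assumptions for the sake of the sketch.

```python
import os

# Assumed enum for illustration; in practice this comes from the API contract.
VALID_STATUSES = {"active", "suspended", "closed"}


def load_config(env=None):
    """Read environment-specific settings from the environment, not from test files."""
    env = os.environ if env is None else env
    return {
        "base_url": env.get("API_BASE_URL", "http://localhost:8080"),
        "token": env.get("API_TOKEN", ""),
    }


def check_user_contract(body):
    """Return contract violations for only the fields the next step depends on."""
    problems = []
    if not isinstance(body.get("id"), int):
        problems.append("id must be an integer")
    status = body.get("status")
    if not isinstance(status, str):
        problems.append("status must be a string")
    elif status not in VALID_STATUSES:
        problems.append(f"status {status!r} is not a known value")
    return problems
```

Because `check_user_contract` ignores fields it does not need, adding a new field to the response leaves the test green, while renaming `id` produces a specific, readable violation.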

qAPI is built around this exact problem. It monitors API contracts and flags when endpoint behaviour changes (new fields, renamed parameters, shifted status codes) so you know about the change before your tests fail. When a change does break a test, qAPI shows you exactly what changed, which tests are affected, and what needs updating. Instead of finding out through a red CI build, you’re looking at a clear diff.

Key outcome you’d get from qAPI: Your workflow tests stay useful as your product evolves, instead of becoming the thing everyone dreads touching.

Run Workflow Tests in CI, But Run the Right Tests at the Right Time

Wiring API tests into CI is table stakes in 2026. But most teams get the structure of this wrong — and end up with either a pipeline that takes 20 minutes to run on every commit, or a pipeline so thin it misses everything that matters.

The real question isn’t “should workflow tests be in CI?” It’s “which workflow tests, triggered by what, and how quickly do they need to fail?”

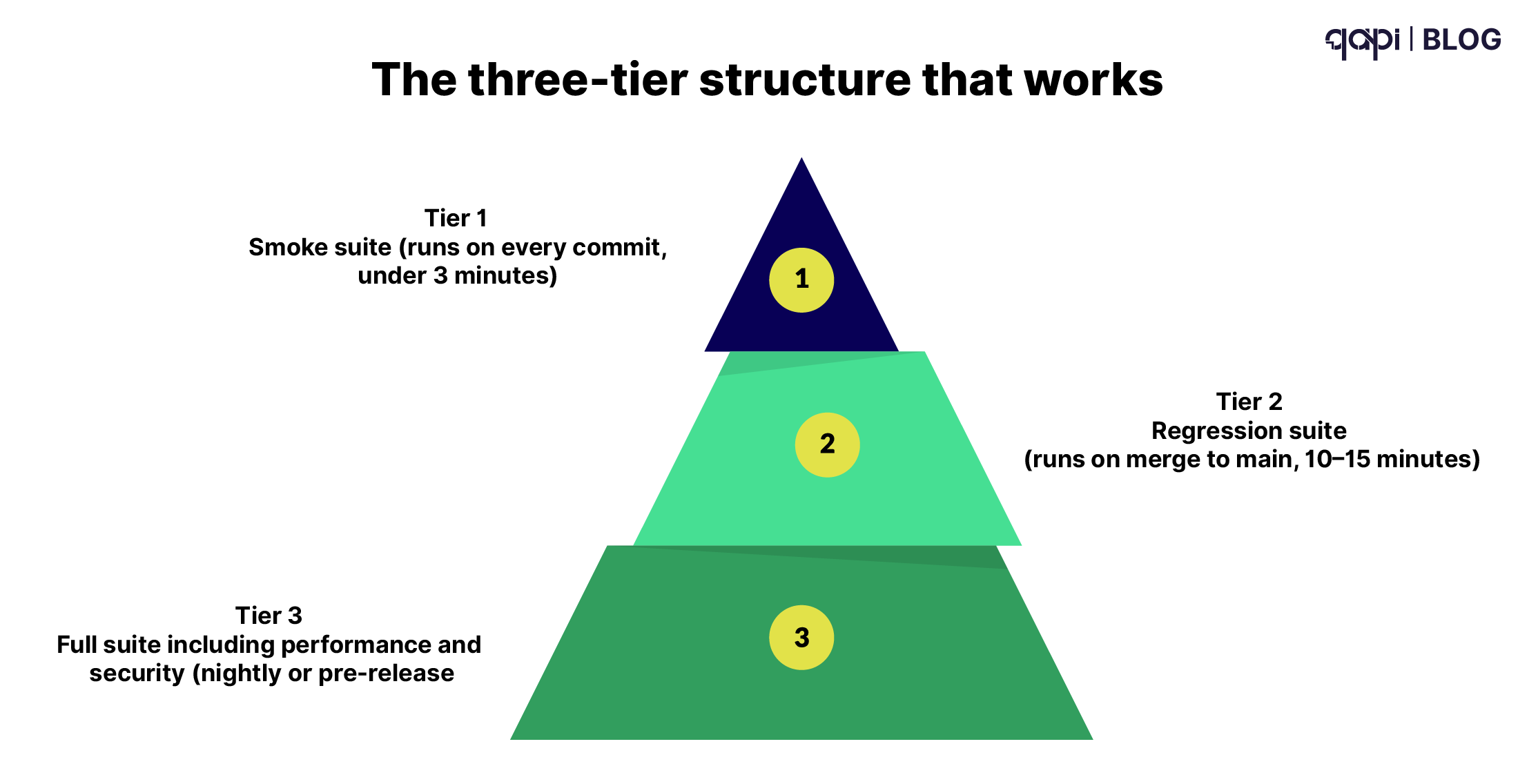

The three-tier structure that works:

Tier 1 — Smoke suite (runs on every commit, under 3 minutes): 4–6 critical workflow tests covering your most important business paths. Registration → login. Create → fetch. The absolute must-not-be-broken flows. If these fail, the PR doesn’t merge, period.

Tier 2 — Regression suite (runs on merge to main, 10–15 minutes): Full workflow coverage across all major user journeys. This is where you catch the subtler integration failures — the ones that don’t break core flows but do break edge cases. Runs nightly at minimum, on every merge to main ideally.

Tier 3 — Full suite including performance and security (nightly or pre-release): End-to-end workflow tests plus response time assertions, rate limit testing, and auth boundary checks. Takes longer, runs less frequently, but gives you the confidence to ship a release.
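One way to express the tiers in code is to tag each workflow test with a tier and select by trigger. The sketch below is a hand-rolled Python registry; the trigger names, the test names, and the choice to make higher tiers include lower ones are assumptions for illustration. Test runners usually offer their own tagging mechanism (pytest markers, for example) that serves the same purpose.

```python
# Which tier each pipeline trigger is allowed to run up to (assumed mapping).
TIER_FOR_TRIGGER = {"commit": 1, "merge": 2, "nightly": 3}

REGISTRY = []


def workflow_test(tier):
    """Decorator that records a test function together with its tier."""
    def register(func):
        REGISTRY.append((tier, func))
        return func
    return register


@workflow_test(tier=1)
def test_register_then_login():
    """Smoke: must never be broken."""


@workflow_test(tier=2)
def test_checkout_with_expired_coupon():
    """Regression: subtler integration path."""


@workflow_test(tier=3)
def test_rate_limit_on_login():
    """Full suite: rate limit and auth boundary checks."""


def select_tests(trigger):
    """Return the names of tests to run for a trigger; higher tiers include lower ones."""
    max_tier = TIER_FOR_TRIGGER[trigger]
    return [func.__name__ for tier, func in REGISTRY if tier <= max_tier]
```

With this layout, a commit runs only the smoke test, a merge adds the regression tier, and the nightly run executes everything.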

The other half of this is making failures actionable. A red CI build that produces a wall of log output is barely better than no CI. When a workflow test fails, the output needs to tell you: which step in the workflow failed, what the request looked like, what the response was, and what assertion didn’t hold. Everything else is noise.
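A failure report with exactly those four pieces can be quite small. The Python sketch below assumes a hypothetical `StepResult` record that each workflow step produces; the field names and the output layout are illustrative, not a prescribed format.

```python
import json
from dataclasses import dataclass


@dataclass
class StepResult:
    """What one workflow step did and whether its assertion held (hypothetical shape)."""
    step: str
    request: dict
    status: int
    body: dict
    assertion: str
    passed: bool


def format_failure(result):
    """Render the four things a reader needs: step, request, response, assertion."""
    return "\n".join([
        f"FAILED STEP: {result.step}",
        f"request:   {json.dumps(result.request, sort_keys=True)}",
        f"response:  {result.status} {json.dumps(result.body, sort_keys=True)}",
        f"assertion: {result.assertion}",
    ])


def first_failure(results):
    """Return a report for the first failing step, or None if every step passed."""
    for result in results:
        if not result.passed:
            return format_failure(result)
    return None
```

Reporting only the first failing step keeps the output short; later steps usually fail as a consequence of the first one and add noise rather than information.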

qAPI integrates directly into GitHub Actions, GitLab CI, Jenkins, and similar pipelines. Tests run as part of your existing deployment workflow — no separate tool to log into, no separate dashboard to check. Failures surface in-line with the information you actually need to fix them: the exact step, the exact response, the exact assertion.

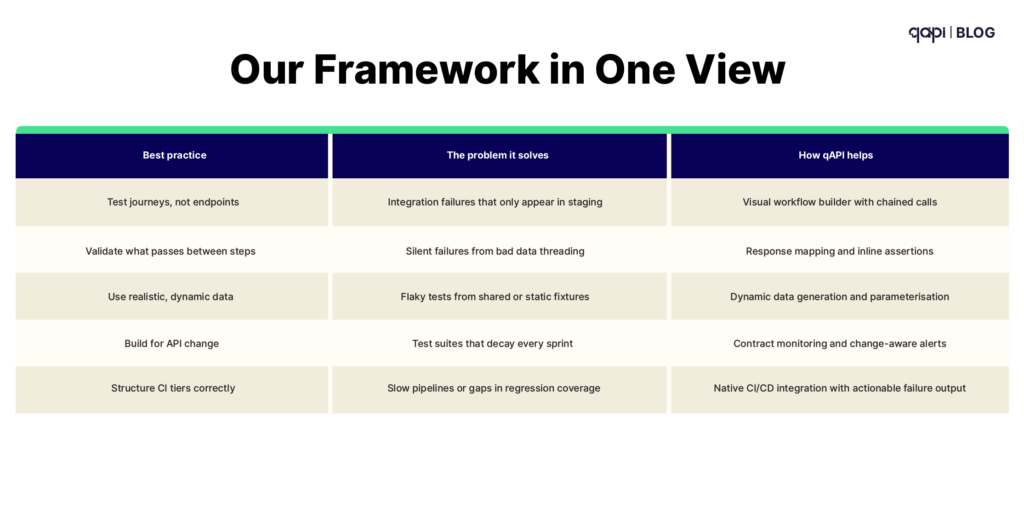

Our Framework in One View

| Best Practice | The Problem It Solves | How qAPI Helps |
|---|---|---|
| Test journeys, not endpoints | Integration failures that only appear in staging | Visual workflow builder with chained calls |
| Validate what passes between steps | Silent failures from bad data threading | Response mapping and inline assertions |
| Use realistic, dynamic data | Flaky tests from shared or static fixtures | Dynamic data generation and parameterisation |
| Build for API change | Test suites that decay every sprint | Contract monitoring and change-aware alerts |
| Structure CI tiers correctly | Slow pipelines or gaps in regression coverage | Native CI/CD integration with actionable failure output |

Frequently Asked Questions

API workflow testing is the practice of testing a sequence of API calls — as they actually occur in a business process — rather than testing each endpoint in isolation. It verifies that data passes correctly between calls, that the system's state changes the right way, and that the end-to-end flow works as expected.

End-to-end testing usually means testing through a UI — simulating a user clicking through the browser. API workflow testing tests the same journeys but at the API layer directly, without the browser. Many teams use both: API workflow tests for fast, reliable regression coverage, and UI E2E tests for final validation before release.

Focus on your most critical business flows first: the paths that, if broken, would immediately impact users or revenue. For most products that's 5–10 core journeys. Within each journey, you need at minimum a happy path, one or two failure scenarios (what happens when auth fails mid-flow, or a resource doesn't exist), and any known edge cases from past production incidents.

Extract dynamic values such as IDs and tokens from the response at each step and inject them into the next call; don't hard-code them. Most testing tools support response variable extraction. In qAPI, this is built into the workflow builder: you point at the field in the response, give it a variable name, and reference it in subsequent steps.

Write schema-based assertions rather than exact-value assertions wherever possible. Assert that a field exists and has the right type, rather than that it equals a specific value. Keep environment-specific config (URLs, tokens, IDs) out of test files entirely. And set up contract monitoring — know about API changes as they happen, before they break your suite.

qAPI is built for both technical and non-technical testers. The workflow builder uses a visual, codeless interface: you add steps, connect them, map response values forward, and set assertions without writing code. For teams that want code-level control, qAPI supports that too.