The product is a hit but now you have new problems. How much traffic can the current APIs handle? How many APIs need changes? And how do you track it over time?

These questions aren’t easy to answer, but as a founder or product owner, this is a position everyone would like to find themselves in.

You’ve just reached your 3-year goal in a single year. Now it’s time to lock in and make decisions that will lay the foundation for the product’s future. Congrats — now your APIs are about to get absolutely hammered.

According to SQmagazine, many companies now handle 50–500M API calls per month on average. That’s roughly 19–193 requests/second, and peaks are often 5–15x higher.
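The conversion from monthly volume to requests per second is simple arithmetic. The sketch below reproduces it, assuming a 30-day month; the function names are illustrative, and the 5x to 15x multipliers are the peak range quoted above.

```python
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumption: a 30-day month


def average_rps(calls_per_month: int) -> float:
    """Average requests per second for a given monthly call volume."""
    return calls_per_month / SECONDS_PER_MONTH


def peak_rps_range(calls_per_month: int, low_mult: int = 5, high_mult: int = 15):
    """Estimated peak range, using the 5x to 15x multipliers as defaults."""
    avg = average_rps(calls_per_month)
    return avg * low_mult, avg * high_mult


for volume in (50_000_000, 500_000_000):
    avg = average_rps(volume)
    low, high = peak_rps_range(volume)
    print(f"{volume:,} calls/month: avg {avg:.0f} req/s, peak {low:.0f} to {high:.0f} req/s")
```

Sizing for the average alone would leave no headroom for the peaks, which is why the multiplier matters more than the monthly total.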

But with this growth, there are a few key areas to watch. Let’s look at them closely:

How Do You Scale API Traffic Without Breaking Performance or Blowing Up Cloud Costs?

As API traffic grows, most teams hit the same wall at some point: systems that worked fine at a few hundred requests per second start slowing down, and error rates increase.

This is a classic API scalability problem, but the issue isn’t just volume; it’s that high-traffic APIs behave very differently under pressure than they do in normal conditions.

A big part of this comes down to how the API is scaled. Many teams start with vertical scaling, that is, adding more CPU or memory to a single server. While this provides short-term relief, it has hard limits and gets expensive fast.

Horizontal scaling, on the other hand, allows you to add more instances as traffic grows by spreading the load across multiple machines.

But here’s the catch: horizontal scaling works best when APIs are stateless. This means any request can be handled by any instance without relying on local memory or session data.

Context: Stateless design is what makes an API truly scalable at high traffic levels.
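To make the stateless idea concrete, here is a minimal sketch in which two API instances share an external session store instead of keeping session data in local memory. `SharedSessionStore` and `ApiInstance` are hypothetical names, and the in-memory dict only stands in for a real external cache or database.

```python
from dataclasses import dataclass, field


@dataclass
class SharedSessionStore:
    """Stand-in for an external store (cache or database); hypothetical."""
    _data: dict = field(default_factory=dict)

    def get(self, session_id: str) -> dict:
        return self._data.get(session_id, {})

    def put(self, session_id: str, session: dict) -> None:
        self._data[session_id] = session


class ApiInstance:
    """An API instance that holds no session state of its own."""

    def __init__(self, name: str, store: SharedSessionStore):
        self.name = name
        self.store = store

    def add_to_cart(self, session_id: str, item: str) -> dict:
        # Read state from the shared store, never from instance memory.
        session = self.store.get(session_id)
        cart = session.get("cart", []) + [item]
        self.store.put(session_id, {**session, "cart": cart})
        return {"handled_by": self.name, "cart": cart}


store = SharedSessionStore()
instance_a = ApiInstance("instance-a", store)
instance_b = ApiInstance("instance-b", store)

first = instance_a.add_to_cart("session-1", "book")
second = instance_b.add_to_cart("session-1", "pen")  # different instance, same session
print(second)
```

Because neither instance remembers anything between calls, either one can be added or removed without losing a user’s cart.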

Load balancing, paired with auto-scaling, is one of the most effective ways to manage costs. Instead of overloading one server, a load balancer distributes incoming API requests evenly across healthy instances. When traffic spikes, auto-scaling groups can spin up new instances automatically and remove them when demand drops.

This ensures your high-traffic APIs stay responsive without forcing you to pay for peak-capacity hardware all year long. Your goal as a product owner isn’t just to survive a single traffic spike; it’s to handle fluctuating traffic every single day.
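The distribution logic described above can be sketched as a round-robin balancer that skips unhealthy instances. This is a toy illustration under stated assumptions, not how any particular load balancer is implemented; the class and method names are hypothetical.

```python
from itertools import count


class RoundRobinBalancer:
    """Rotates requests across instances that are currently marked healthy."""

    def __init__(self, instances):
        self.instances = list(instances)
        self.healthy = set(self.instances)
        self._counter = count()

    def mark_unhealthy(self, instance: str) -> None:
        self.healthy.discard(instance)

    def mark_healthy(self, instance: str) -> None:
        if instance in self.instances:
            self.healthy.add(instance)

    def pick(self) -> str:
        # Only healthy instances are candidates for the next request.
        candidates = [i for i in self.instances if i in self.healthy]
        if not candidates:
            raise RuntimeError("no healthy instances available")
        return candidates[next(self._counter) % len(candidates)]


balancer = RoundRobinBalancer(["api-1", "api-2", "api-3"])
print([balancer.pick() for _ in range(3)])

balancer.mark_unhealthy("api-2")  # e.g. a failed health check
print([balancer.pick() for _ in range(4)])
```

An auto-scaling group would effectively call something like `mark_healthy` for new instances as they come online and drop them again when demand falls.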

The biggest mindset shift is designing for change, not just for peak numbers. Many teams size infrastructure for “maximum traffic” and hope it covers future growth. In reality, API traffic growth is uneven.

Flash sales, product launches, partner integrations, and viral campaigns create sudden bursts that basic setups can’t handle efficiently.

When you build scalable APIs that expand and contract automatically, performance stays stable and cloud costs stay under control.

How Should API Design and Governance Evolve as You Go from One Team to Many?

As teams grow from a single squad to multiple cross-functional groups, the challenge of scaling APIs shifts from infrastructure to design consistency and governance.

Once infrastructure stops being the limiting factor, a different problem emerges: API design issues. When every team defines its own API patterns, surface conventions, error formats, and versioning strategies, the ecosystem becomes a mess.

This design gap slows down integration, increases cognitive load for developers, and kills reusability. Research shows that without strong API governance, reusability can drop by more than 30% in large organizations.

To solve this, teams must scale along two dimensions: the system (to handle workload growth) and the design (to maintain consistency).

On the design side, teams must adopt API style guides that define URL structures, pagination schemes, error objects, naming conventions, authentication flows, and versioning rules.

These guides help ensure that whether API X was built by Team A or Team B, it behaves predictably and integrates cleanly.

Design governance should also be backed by a dedicated group that owns review processes and contract-first validation. Rather than detecting breaking changes in staging or production, teams should validate API contracts early, ideally during CI runs. This prevents minor changes, like a renamed field or changed response order, from becoming major issues at scale.
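A contract check of this kind can be very small. The sketch below compares a published response contract with a proposed one and flags removed, renamed, or retyped fields; the field names and the flat `name -> type` format are assumptions for illustration, not a real OpenAPI parser.

```python
def find_breaking_changes(old_fields: dict, new_fields: dict) -> list:
    """Return a list of changes that would break existing consumers."""
    problems = []
    for name, old_type in old_fields.items():
        if name not in new_fields:
            problems.append(f"removed or renamed field: {name}")
        elif new_fields[name] != old_type:
            problems.append(
                f"type changed for {name}: {old_type} -> {new_fields[name]}"
            )
    # Fields that only exist in new_fields are additive and not flagged.
    return problems


# Hypothetical contracts: 'total' was renamed to 'total_amount'.
published = {"id": "string", "total": "number", "status": "string"}
proposed = {"id": "string", "total_amount": "number", "status": "string"}

issues = find_breaking_changes(published, proposed)
for issue in issues:
    print(issue)
```

In a CI run, a non-empty `issues` list would fail the build, so the renamed field is caught before any consumer sees it.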

Companies with formal API governance and contract validation report fewer integration failures and smoother scaling during peak traffic events, according to API industry reports.

Testing Your APIs: What is the Ideal Way?

We’ve looked at how to grow your API infrastructure, manage costs, and handle design and governance specifications. Once these are done, the only challenge that remains is testing them.

With API testing, you put your APIs through a series of tests to ensure they work as designed. There are several types of tests, because mature teams don’t just check if the API works.

They also confirm that it is reliable, secure, and delivers on its business promise. This is how teams should plan to test their APIs once the design process begins.

1. Run Functional Tests

Functional tests ensure the API always matches the expected output.

| Focus Area | Example |
|---|---|
| Endpoint Behavior | If you ask for information (GET), you will get information. If you ask to change something (POST/PUT), you should confirm the change happened exactly as requested. |
| HTTP Method | Validate that requests using a method the endpoint does not support (for example, POST to a read-only resource) are rejected with 405 Method Not Allowed, and that PUT is used for full replacements rather than partial updates (which should be PATCH). |
| Expected Schema | Validate that the response structure for a successful transaction includes all required fields, as specified in the OpenAPI/Swagger documentation. |
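As a rough illustration of the schema row, the following sketch checks a response’s status code and required fields. The field list is hypothetical; in practice it would be derived from your OpenAPI/Swagger document rather than hard-coded.

```python
# Hypothetical required fields for a successful transaction response.
REQUIRED_FIELDS = {
    "transaction_id": str,
    "amount": str,      # assumed to be sent as a decimal string
    "currency": str,
    "status": str,
}


def validate_response(status_code: int, body: dict, expected_status: int = 200) -> list:
    """Return a list of functional-test failures; empty means the check passed."""
    errors = []
    if status_code != expected_status:
        errors.append(f"expected status {expected_status}, got {status_code}")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in body:
            errors.append(f"missing field: {name}")
        elif not isinstance(body[name], expected_type):
            errors.append(f"wrong type for field: {name}")
    return errors


good = {"transaction_id": "t-1", "amount": "19.99", "currency": "USD", "status": "OK"}
bad = {"transaction_id": "t-2", "amount": 19.99, "status": "OK"}

print(validate_response(200, good))
print(validate_response(200, bad))
```

The same helper can back the HTTP method check, for example `validate_response(405, {}, expected_status=405)` would still report the missing body fields, so method tests usually assert on the status code alone.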

2. Check Data Accuracy & Integrity

This deserves a close look, because wrong data spreads silently and is extremely hard to fix later. So as your API usage grows, make sure to run data accuracy checks. Here are some examples that you can use as a reference:

| Focus Area | Example |
|---|---|
| Calculated Values | If an API calculates sales tax or discounts, test the logic against known financial benchmarks. For example, a 10% discount on a 200.00 order must return exactly 180.00. |
| Persistence Verification | After a PUT /inventory/{sku} update, run a follow-up query on the database layer (or a separate read-only API) to confirm the write transaction committed the value correctly. Likewise, updating a customer’s address must reflect that exact change in the database immediately. |
| Data Type Fidelity | Validate that fields intended as a BigDecimal (e.g., currency) are not accidentally converted to floating-point numbers, which introduces rounding errors that are invisible at the surface level. |
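The data type row is easy to demonstrate. The sketch below uses Python’s standard `decimal` module to show how a float drifts where a decimal does not; `apply_discount` is a hypothetical helper, and the half-up rounding rule is an assumption you would replace with your own financial rules.

```python
from decimal import Decimal, ROUND_HALF_UP

# Binary floating point cannot represent 0.1 or 0.2 exactly.
float_total = 0.1 + 0.2
decimal_total = Decimal("0.10") + Decimal("0.20")

print(float_total == 0.3)                  # False
print(decimal_total == Decimal("0.30"))    # True


def apply_discount(price: Decimal, percent: Decimal) -> Decimal:
    """Apply a percentage discount and round to cents (assumed rule: half-up)."""
    discounted = price * (Decimal("100") - percent) / Decimal("100")
    return discounted.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(apply_discount(Decimal("200.00"), Decimal("10")))  # 180.00
```

A data accuracy test would assert on the exact decimal value, and also fail if the API ever returns the amount as a JSON float instead of a decimal string.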

3. Business Logic Validation

To build high-quality software, teams have to go beyond checking whether an API returns a response and focus on enforcing real business logic through API testing. Business logic refers to the rules and workflows that reflect how a real application should behave in real use cases.

When business logic fails, entire processes—from order handling to payments—can break your product, even if the API itself technically “works.”

| Focus Area | Example |
|---|---|
| Workflow | In a typical order lifecycle, confirming that an order status cannot transition directly from PENDING to SHIPPED without first passing through PROCESSING. |
| Eligibility Checks | If a customer is tagged as “Bronze”, the API should automatically reject any request for “Platinum-only” features, even if the request is technically valid in every other way. |
| Rate Limiting & Limits | If an API allows 10 withdrawals per minute, the first 10 go through successfully, but the 11th request must be blocked with a 429 Too Many Requests error. |
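Two of these rules are sketched below: the order workflow as an allowed-transition map, and the rate limit as a fixed-window counter. Both are simplified models for writing tests against, with hypothetical names; a production limiter would typically live in a gateway or shared store, and the fixed-window strategy is an assumption.

```python
# Allowed order status transitions (only the lifecycle named in the table).
ALLOWED_TRANSITIONS = {
    "PENDING": {"PROCESSING"},
    "PROCESSING": {"SHIPPED"},
    "SHIPPED": set(),
}


def can_transition(current: str, target: str) -> bool:
    return target in ALLOWED_TRANSITIONS.get(current, set())


class FixedWindowLimiter:
    """Allows `limit` calls per window, then answers 429 until the window resets."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window_seconds = window_seconds
        self._window_start = None
        self._count = 0

    def allow(self, now: float) -> int:
        """Return the HTTP status the API should send for a call at time `now`."""
        if self._window_start is None or now - self._window_start >= self.window_seconds:
            self._window_start = now  # start a fresh window
            self._count = 0
        if self._count >= self.limit:
            return 429
        self._count += 1
        return 200


print(can_transition("PENDING", "SHIPPED"))     # False
print(can_transition("PENDING", "PROCESSING"))  # True

limiter = FixedWindowLimiter(limit=10, window_seconds=60)
statuses = [limiter.allow(now=second) for second in range(11)]
print(statuses[-1])  # 429
```

Passing `now` explicitly keeps the test deterministic, so the 11th-request case does not depend on wall-clock timing.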

Another key measure is integrating automated testing into the development workflow. API automation enables teams to run these logic-focused tests every time code changes are made, giving fast, reliable feedback without adding manual burden.

Automated API tests run in seconds compared with much slower UI tests, sometimes up to 35× faster, enabling more frequent checks and broader coverage across business rules and edge conditions.

This drastically improves confidence in releases because the logic paths that matter most, such as eligibility checks for premium features or rate-limiting thresholds, are verified at scale with little to no human intervention.

Teams should also treat API testing as an important health metric and define their own guardrails to ensure product stability and increase customer retention. Because APIs operate independently from user interfaces, it is a good practice to test API logic before the UI is even built, allowing logic issues to be caught early when they are cheaper and easier to fix.

Early testing of business logic through automated testing tools integrated into CI/CD pipelines ensures that every change reinforces—or at least does not break—the expected real-world behavior.

Finally, teams should measure and evolve their API testing strategy.

At the end of the day, enforcing business logic in API testing is not optional. It’s essential to sustainable software quality and fast delivery cycles, and robust automated testing practices are among the most effective ways to achieve stability at scale.

4. Performance & Reliability

| Focus Area | Example |
|---|---|
| Latency Consistency | Measure the 95th and 99th percentile response times, not just the average, to check how consistent latency really is. |
| Stress Testing & Saturation | Put load that exceeds documented throughput (e.g., sending 150% of expected peak traffic) to confirm the API returns 503 Service Unavailable, rather than corrupting data or crashing. |
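To see why percentiles matter more than the average, here is a small sketch using the nearest-rank method on made-up latency samples. The sample values are invented purely for illustration, and other percentile definitions (such as interpolation) would give slightly different numbers.

```python
import math


def percentile(samples, pct: int):
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Invented samples: most requests are fast, a few are very slow.
latencies_ms = [20] * 90 + [300] * 8 + [2000] * 2

mean_ms = sum(latencies_ms) / len(latencies_ms)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.0f}ms p95={p95_ms}ms p99={p99_ms}ms")
```

The mean looks acceptable, yet the slowest requests are orders of magnitude worse, which is exactly what an average-only dashboard hides.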

How Do You Test APIs With Incomplete Documentation?

In short: You don’t—at least not by following the docs.

Outdated or missing docs force teams to guess behavior, hunt for old specs, or re-learn things the system already knows.

Instead, teams should observe how the API actually behaves (we’ve already talked about this above) by sending real requests, inspecting real responses, and treating runtime behavior as a reference point.

With qAPI’s AI summarizer, you get AI assistance that makes it easier to populate documentation end-to-end and understand what the API is designed to do.

How Do You Test for API Chaining?

You test them as one continuous flow, not as separate calls, because that’s how real users experience the system. In most applications, one API depends on data from another, so a single failure can break the entire journey.

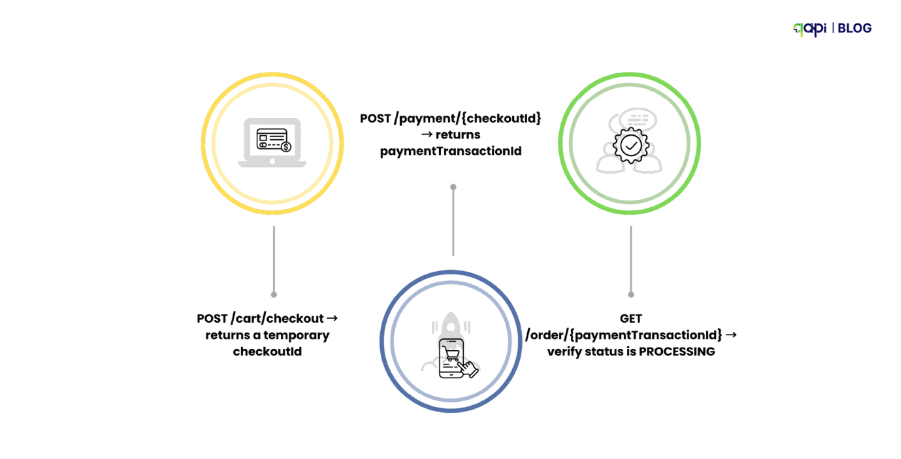

Example (E-commerce Checkout):

- POST /cart/checkout → returns a temporary checkoutId

- POST /payment/{checkoutId} → returns paymentTransactionId

- GET /order/{paymentTransactionId} → verify status is PROCESSING

The most critical test here is what happens when something fails. If the payment is declined in step 2, the system should clean up properly—mark the cart as abandoned or roll back the checkout—rather than leaving the order in an incomplete state.
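The three-step flow and its failure case can be sketched as one chained test. `FakeShopApi` is an in-memory stand-in for the real endpoints, and its behavior (the 402 status on decline and the `ABANDONED` state) is an assumption made for illustration; against a real system you would replace its methods with HTTP calls.

```python
import uuid


class FakeShopApi:
    """In-memory stand-in for the checkout, payment, and order endpoints."""

    def __init__(self):
        self.checkouts = {}
        self.orders = {}

    def post_cart_checkout(self, cart_id: str) -> dict:
        checkout_id = str(uuid.uuid4())
        self.checkouts[checkout_id] = {"cart_id": cart_id, "state": "OPEN"}
        return {"checkoutId": checkout_id}

    def post_payment(self, checkout_id: str, card_ok: bool = True) -> dict:
        checkout = self.checkouts[checkout_id]
        if not card_ok:
            checkout["state"] = "ABANDONED"  # assumed clean-up rule on decline
            return {"status": 402, "paymentTransactionId": None}
        txn_id = str(uuid.uuid4())
        checkout["state"] = "PAID"
        self.orders[txn_id] = {"status": "PROCESSING"}
        return {"status": 200, "paymentTransactionId": txn_id}

    def get_order(self, txn_id: str):
        return self.orders.get(txn_id)


def run_checkout_flow(api: FakeShopApi, card_ok: bool = True):
    """Run the chain as one flow, passing each response into the next call."""
    checkout_id = api.post_cart_checkout("cart-1")["checkoutId"]
    payment = api.post_payment(checkout_id, card_ok=card_ok)
    txn_id = payment["paymentTransactionId"]
    order = api.get_order(txn_id) if txn_id else None
    return checkout_id, payment, order


# Happy path: the order ends up in PROCESSING.
api = FakeShopApi()
_, payment, order = run_checkout_flow(api)
print(payment["status"], order)

# Failure path: a declined payment must not leave an order behind.
failed_api = FakeShopApi()
failed_checkout_id, failed_payment, failed_order = run_checkout_flow(failed_api, card_ok=False)
print(failed_payment["status"], failed_order, failed_api.checkouts[failed_checkout_id]["state"])
```

The value of the chained form is that the failure-path assertions check state across all three steps, not just the response of the call that failed.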

This matters a lot because broken workflows cause data inconsistencies, failed orders, and customer frustration, something businesses often ignore when growth becomes too big to handle.

Wrapping up

Growing teams often assume API scaling is a future problem—something to solve once traffic explodes or systems slow down.

Just like Google search has shifted from “pages” to “answers,” new systems have shifted from UI-driven flows to API-driven architectures. If you’re not testing APIs with scale in mind early on, you’re already digging your own grave.

Mature teams don’t wait for failures to tell them their APIs don’t scale. They instrument, test, and observe continuously. This level of ownership turns API testing from a defensive task into a strategic advantage: it tells teams where they will break next, not just where they broke last time.

Your approach to scaling APIs depends on what you want to protect.

If it’s reliability, you focus on load, rate limits, and graceful failure. If it’s velocity, you invest in automated testing that runs on every change and across every dependent service. If it’s cost and performance, you measure real request patterns instead of assumptions.

It is simple once you state what you want.

We’re in a similar messy middle with APIs as we are with AI-driven search: patterns are changing faster than best practices can keep up.

Teams that start treating API testing as a first-class scaling strategy today will have a massive advantage tomorrow. When the growth hits, you won’t be guessing. You’ll already know.