If your organization has more than a handful of services, you’ve probably seen this movie:

A field name changes from customerId to clientId.

• Service A’s local tests pass.

• CI pipelines stay green.

• Deployments proceed normally.

Then, days later:

• Service B’s integration layer starts failing.

• Error rates start to climb.

• Customer-facing systems degrade.

• Incident response begins.

The issue wasn’t broken code. It was a broken contract.

This is one of the most common reliability failures we see in microservices architectures, and it exposes a critical weakness in how many teams still approach integration testing.

The reason is that unit tests are too local to see cross-service impact, while full end-to-end suites are too slow and brittle to cover every change. In 2026, you need something in the middle that can keep up with microservices, third-party APIs, and AI-generated changes.

But contract testing today is no longer just an API validation strategy. In practice, it has turned into a basic reliability mechanism for teams managing independently deployed services, external integrations like Stripe or Twilio, and, increasingly, AI-generated code changes that can introduce regressions faster than traditional QA processes can document them.

For organizations adopting platforms such as qAPI or using agentic testing systems, contract testing becomes even more powerful because large portions of validation and change detection can be automated.

Treat Contracts as “APIs for Your APIs”

Most teams treat OpenAPI specs as documentation. Contract testing treats them as executable promises. A contract says:

“If you call GET /orders/{id} with X, I promise to respond with Y status codes and a body that at least has id, status, and totalAmount shaped like this…”

To be precise:

• The provider promises:

- These HTTP methods and paths exist.

- For these inputs, you’ll get these outputs (status, headers, shape).

• The consumer promises:

- “I will only rely on these parts of the response, in these ways.”

Contract testing verifies both sides, so that consumers don’t depend on things that were never promised and providers don’t silently break what consumers rely on.

In practice, this will give you two big things:

- You can move faster because you can see whether a change is safe before deploying.

- You reduce the need for brittle, full‑stack “everything talking to everything” tests.
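To make the idea of an executable promise concrete, here is a minimal sketch in plain Python. The contract layout, the `ORDER_CONTRACT` name, and the `violations` helper are assumptions made for illustration; they are not the format of any particular tool. The check only requires that the body has at least the promised fields, so extra fields are tolerated.

```python
# Hypothetical contract for GET /orders/{id}; field names follow the example above.
ORDER_CONTRACT = {
    "request": {"method": "GET", "path": "/orders/{id}"},
    "response": {
        "status": 200,
        # The body must contain AT LEAST these fields with these types.
        "required_fields": {
            "id": str,
            "status": str,
            "totalAmount": (int, float),
        },
    },
}


def violations(contract, status, body):
    """Return a list of human-readable violations (an empty list means OK)."""
    problems = []
    expected = contract["response"]
    if status != expected["status"]:
        problems.append(f"status {status} != {expected['status']}")
    for field, types in expected["required_fields"].items():
        if field not in body:
            problems.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(body[field], bool) or not isinstance(body[field], types):
            problems.append(f"wrong type for {field}: {type(body[field]).__name__}")
    return problems
```

A provider response with an extra field passes, while one that turns `totalAmount` into a string is reported as a violation.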

Why Integration Testing Alone Isn’t Enough Anymore

Let’s take a realistic example:

• You’ve got 50+ microservices.

• Some are owned by different teams; some are legacy; some are AI‑driven.

• You also rely on external APIs (payments, KYC, AI, messaging).

To “fully” test this with classic integration tests, you will need:

• All services online and running, potentially across different stacks.

• Realistic seed data that reflects production behavior.

• Stable test data in third‑party sandboxes.

• End-to-end flows that traverse 5–10 services in a single test case.

You might manage a few critical scenarios this way, but you cannot cover every consumer variant across 50 services, every minor field change, or every failure mode and edge case without enormous infrastructure and maintenance costs.

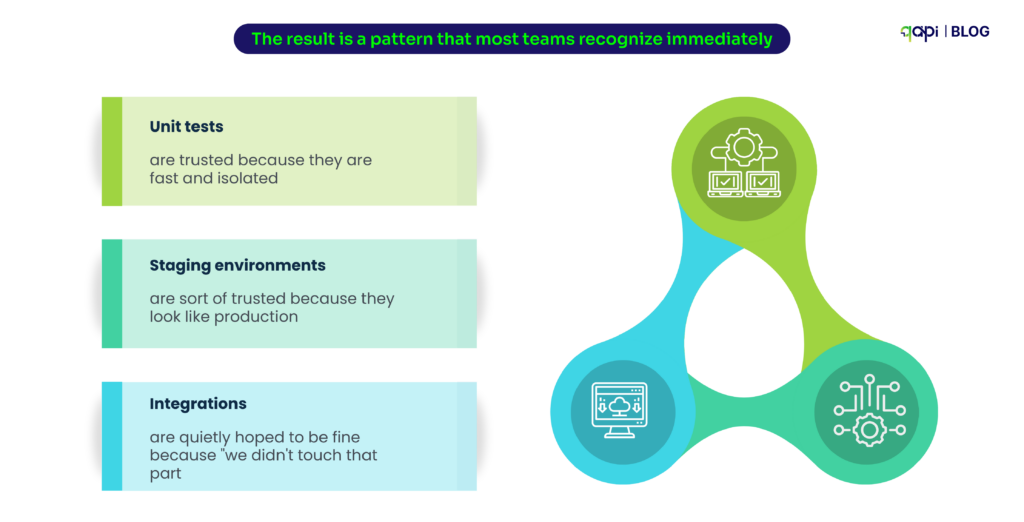

The result is a pattern that most teams recognize immediately:

• Unit tests are trusted because they are fast and isolated

• Staging environments are sort of trusted because they look like production

• Integrations are quietly hoped to be fine because “we didn’t touch that part”

This is how subtle contract breaks survive all the way to production, and it is exactly the gap we’re trying to expose.

Microservices contract testing is about shortening that feedback loop and making service-to-service integrations first-class test targets, rather than side effects discovered during a three-hour end-to-end run.

Consumer‑Driven Contracts Are the Only Thing That Scales

At small scale, a provider-driven approach feels reasonable: the provider publishes an OpenAPI spec, consumers read it, and everyone adapts. At 30 to 50 services, this model starts to break down.

Why? Because each consumer:

• Uses a subset of fields.

• Cares about specific edge cases.

• Has its own tolerance for a change.

Here is how consumer‑driven contracts work in practice. Let’s imagine an Orders API consumed by:

• Web frontend.

• Mobile app.

• Billing service.

• Analytics pipeline.

Each consumer writes tests that encode:

• The request they send.

• The reply they expect: specific fields, formats, and rules.

For example, the billing service writes:

• When I call GET /orders/{id} as a system user, I expect:

- Status 200.

- currency present and an ISO 4217 code.

- totalAmount as a number, not string.

- status ∈ {PAID, REFUNDED}.

When those consumer tests pass, the generated contracts are published to the broker. The Orders API team then pulls all consumer contracts and runs a provider contract verification suite that replays every consumer expectation against the actual API. If a developer ships a change that drops currency or silently renames totalAmount, verification fails before deployment reaches any shared environment.

Now scale that across dozens of services: the provider can see, in one place, exactly what each consumer relies on, and whether a change is safe.
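The billing example above can be sketched without any specific contract library. Everything here is hypothetical: the `BILLING_CONTRACT` layout, the `verify_provider` helper, and the two provider functions that stand in for two versions of the Orders API. A real contract would be stored as data (for example JSON) rather than Python lambdas, and the currency check only tests the three-letter shape of an ISO 4217 code, not membership in the official list.

```python
import re

# Hypothetical expectations published by the billing consumer.
BILLING_CONTRACT = {
    "consumer": "billing",
    "request": {"method": "GET", "path": "/orders/123", "role": "system"},
    "expect": {
        "status": 200,
        "checks": {
            # Shape check only: three uppercase letters.
            "currency": lambda v: isinstance(v, str)
            and re.fullmatch(r"[A-Z]{3}", v) is not None,
            # A number, not a string (and not a bool).
            "totalAmount": lambda v: isinstance(v, (int, float))
            and not isinstance(v, bool),
            "status": lambda v: v in {"PAID", "REFUNDED"},
        },
    },
}


def verify_provider(handler, contracts):
    """Replay each consumer request against the provider and collect failures."""
    failures = []
    for contract in contracts:
        status, body = handler(contract["request"])
        if status != contract["expect"]["status"]:
            failures.append((contract["consumer"], "status"))
        for field, check in contract["expect"]["checks"].items():
            if field not in body or not check(body[field]):
                failures.append((contract["consumer"], field))
    return failures


def orders_v1(request):
    """Current provider behavior (stand-in for the real API)."""
    return 200, {"id": "123", "status": "PAID", "currency": "USD", "totalAmount": 99.0}


def orders_v2(request):
    """A change that drops currency and renames totalAmount."""
    return 200, {"id": "123", "status": "PAID", "amount": 99.0}
```

Running `verify_provider(orders_v2, [BILLING_CONTRACT])` reports the `currency` and `totalAmount` failures for the billing consumer, which is the signal the provider team needs before deploying.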

What We Don’t Talk About

If contract testing for microservices were as simple as adding a library and running tests, adoption would be universal. But in reality, the implementation problem is quite real and worth naming directly.

Contracts die when no one owns them. Without clear ownership, contracts drift away from actual behavior, multiply into hundreds of tiny interactions that nobody understands, and gradually encode internal implementation details that change frequently.

Keeping contracts aligned with real traffic requires deliberate tooling and process.

CI/CD integration adds pipeline complexity. The basic flow sounds clean on paper — consumers run tests, publish contracts, providers verify against them, pipelines stay green. In practice, getting this to work reliably across multiple teams and repositories takes real effort. Version compatibility alone can become a rabbit hole.

And when things go wrong, pipeline failures often feel random rather than useful. That is usually the moment when teams quietly abandon the whole approach and go back to what they were doing before.

Third-party and AI API testing presents a different challenge entirely. You do not control when a payment vendor deprecates a field or when an AI inference API begins returning slightly different response shapes, and you cannot spin up their provider locally for standard verification workflows. Classical consumer-driven patterns do not map cleanly to external dependencies, and yet these are precisely the integrations where behavioral drift is most dangerous and least visible.

These are exactly the places where a contract break can take down a checkout flow or silently corrupt your downstream data. And yet they are the ones most teams leave unguarded, because the standard tooling does not fit them.

The good news is that all three of these problems are solvable with the right process and platform support. The next section covers how to build a setup that holds up under real conditions — not just in a demo.

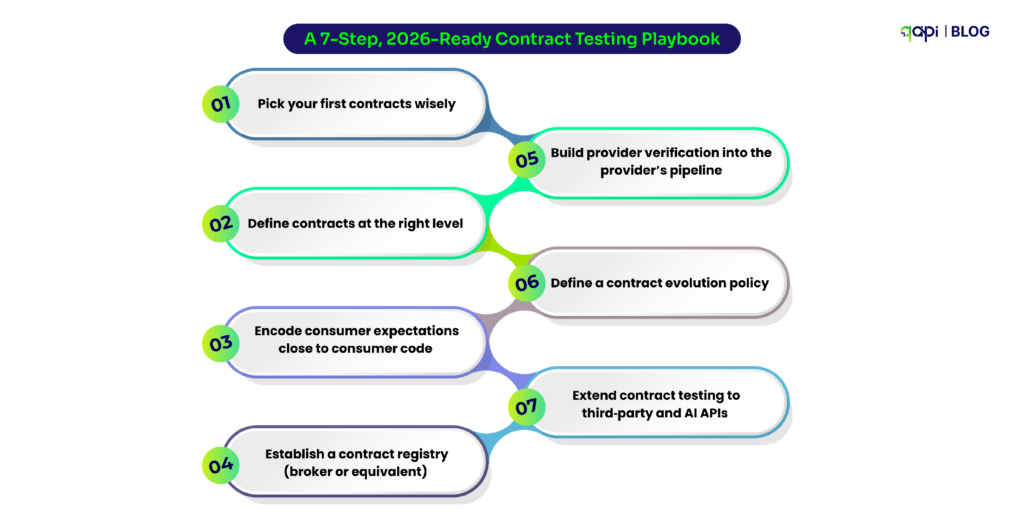

A 7‑Step, 2026‑Ready Contract Testing Playbook

Enough about the problems. Here is a more realistic flow you can implement in your stack, along with where qAPI can make your life easier.

Step 1: Pick your first contracts wisely

You don’t have to start with every API. Start with:

• High‑blast‑radius services (auth, payments, orders, onboarding).

• Painful integrations (recent incidents, frequent changes).

• Third‑party dependencies that are business‑critical for your process.

So define a goal like:

“We want to ensure payments, orders, and ledger services can change without silently breaking each other.”

Step 2: Define contracts at the right level

For each integration:

• Identify business‑level interactions, not low‑level HTTP noise.

• For example, instead of 20 tiny contracts for GET /orders, define 3–5 real scenarios:

- Fetching a paid order for billing.

- Fetching a pending order for UI.

- Fetching a refunded order for analytics.

Each scenario:

• Includes the minimal set of fields that consumer actually uses.

• Includes constraints that really matter (types, non‑null fields, enums).

• Avoids over‑specifying internal fields that might change often.
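As a rough sketch of what scenario-level contracts might look like as data, the snippet below models the three scenarios above. The `Scenario` class, the consumer names, and the field choices are illustrative assumptions. The `fields_in_use` helper shows one payoff of keeping each scenario minimal: the provider can see which fields are relied on and by whom, and anything absent from the result is free to change.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """One business-level interaction, seen from a single consumer."""
    name: str
    consumer: str
    required: dict = field(default_factory=dict)  # field -> expected type(s)
    enums: dict = field(default_factory=dict)     # field -> allowed values


# Hypothetical scenarios for GET /orders/{id}.
SCENARIOS = [
    Scenario(
        "paid order for billing", "billing",
        required={"id": str, "totalAmount": (int, float), "currency": str},
        enums={"status": {"PAID"}},
    ),
    Scenario(
        "pending order for UI", "web",
        required={"id": str},
        enums={"status": {"PENDING"}},
    ),
    Scenario(
        "refunded order for analytics", "analytics",
        required={"id": str, "totalAmount": (int, float)},
        enums={"status": {"REFUNDED"}},
    ),
]


def fields_in_use(scenarios):
    """Map each response field to the set of consumers that rely on it."""
    used = {}
    for scenario in scenarios:
        for name in list(scenario.required) + list(scenario.enums):
            used.setdefault(name, set()).add(scenario.consumer)
    return used
```

Here `fields_in_use(SCENARIOS)["currency"]` contains only `billing`, so a change to `currency` needs to be checked against one consumer rather than all three.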

Intelligent API testing platforms can accelerate this step considerably by analyzing real traffic and inferring which fields each consumer actually relies on, rather than requiring teams to guess from documentation.

Step 3: Encode consumer expectations close to consumer code

For each consumer you must:

• Add a contract testing suite in the same repo as the consumer.

• Use language‑appropriate libs (Pact etc.) or your own test harness.

• Test against a mock/simulated provider—not the actual API.

The key is: consumer tests become living documentation of how they use the provider. They should run on every PR for that consumer.
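The sketch below shows the general shape of a consumer-side test against a simulated provider, using only the standard library. `MockProvider`, `invoice_total`, and `export_contract` are hypothetical names, and the JSON layout is an assumption rather than the file format of Pact or any other tool. The point is that the mock records what the consumer actually asked for, and that record becomes the contract.

```python
import json


class MockProvider:
    """Hypothetical stand-in for the Orders API that records interactions."""

    def __init__(self, canned):
        self.canned = canned        # (method, path) -> (status, body)
        self.interactions = []

    def request(self, method, path):
        status, body = self.canned[(method, path)]
        self.interactions.append({
            "request": {"method": method, "path": path},
            "response": {"status": status, "body": body},
        })
        return status, body


def invoice_total(provider, order_id):
    """Consumer code under test: how billing uses the Orders API."""
    status, body = provider.request("GET", f"/orders/{order_id}")
    if status != 200:
        raise RuntimeError(f"unexpected status {status}")
    return f"{body['totalAmount']:.2f} {body['currency']}"


def export_contract(mock, consumer="billing", provider="orders"):
    """Serialize the recorded interactions so they can be published."""
    return json.dumps(
        {"consumer": consumer, "provider": provider,
         "interactions": mock.interactions},
        sort_keys=True,
    )


def test_invoice_total_for_paid_order():
    mock = MockProvider({
        ("GET", "/orders/123"): (
            200, {"status": "PAID", "totalAmount": 99.0, "currency": "USD"}),
    })
    assert invoice_total(mock, "123") == "99.00 USD"
    assert len(mock.interactions) == 1
```

Because the test never touches the real API, it can run on every PR in the consumer repo without any shared environment.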

With qAPI, an agent can:

• Observe which calls the consumer actually makes.

• Propose/update those contract tests when new patterns emerge.

• Flag when consumer code starts relying on a previously unused field.

Step 4: Establish a contract registry (broker or equivalent)

Contracts are useless if they live only in a single repo.

You need:

• A central place where contracts are published and versioned.

• Metadata: which consumer, which version, which environment.

• A way for providers to query “what do my consumers expect today?”

This can be a dedicated broker or part of your platform tooling. The principle matters more than the brand.
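To illustrate the principle rather than any product, here is a minimal in-memory registry sketch. The `ContractRegistry` class and its method names are assumptions; a real broker would add persistence, authentication, and richer version handling. It keeps the latest contract per consumer for each provider and environment, and lets the provider ask what its consumers expect today.

```python
def _parse(version):
    """Turn '1.10.0' into (1, 10, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


class ContractRegistry:
    """Hypothetical central store for published consumer contracts."""

    def __init__(self):
        # (provider, env) -> {consumer: (version, contract)}
        self._store = {}

    def publish(self, *, consumer, provider, version, env, contract):
        """Record a contract, keeping only the newest version per consumer."""
        by_consumer = self._store.setdefault((provider, env), {})
        current = by_consumer.get(consumer)
        if current is None or _parse(version) >= _parse(current[0]):
            by_consumer[consumer] = (version, contract)

    def expectations(self, provider, env):
        """Answer: what do my consumers expect today?"""
        by_consumer = self._store.get((provider, env), {})
        return {
            consumer: {"version": version, "contract": contract}
            for consumer, (version, contract) in by_consumer.items()
        }
```

A provider pipeline would call `expectations("orders", "staging")` and verify against every contract it returns.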

qAPI’s advantage is that, once integrated, it can test against traffic across all your APIs, so in many cases it can act as an implicit “contract registry”:

• It knows what endpoints exist.

• It knows which consumers call them and how.

• It can detect drift between what’s documented and what’s happening.

Step 5: Build provider verification into the provider’s pipeline

For each provider, add a step to the CI pipeline that:

• Finds all relevant contracts from the registry.

• Stands up the provider (locally or in an ephemeral environment).

• Replays contract requests and asserts responses match expectations.

If verification fails, the provider pipeline fails.
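A pipeline gate along these lines could look like the following sketch, which uses only the Python standard library. `OrdersHandler` is a hypothetical stand-in for the real provider, the contract dictionaries are an assumed format, and a real job would fetch the contracts from the registry instead of receiving them as an argument. The gate starts the provider on an ephemeral local port, replays each contract request over HTTP, and returns a non-zero exit code on any failure.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class OrdersHandler(BaseHTTPRequestHandler):
    """Hypothetical provider under test: a tiny stand-in for the Orders API."""

    def do_GET(self):
        payload = {"id": "123", "status": "PAID",
                   "currency": "USD", "totalAmount": 99.0}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep pipeline logs quiet


def replay(base_url, contracts):
    """Replay each contract request over HTTP and collect failures."""
    # An empty ProxyHandler keeps localhost calls away from any configured proxy.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    failures = []
    for contract in contracts:
        # urllib raises HTTPError on 4xx/5xx; this sketch only covers 2xx cases.
        with opener.open(base_url + contract["path"], timeout=5) as response:
            status = response.status
            body = json.loads(response.read())
        if status != contract["status"]:
            failures.append(f"{contract['consumer']}: status {status}")
        for name in contract["fields"]:
            if name not in body:
                failures.append(f"{contract['consumer']}: missing {name}")
    return failures


def run_gate(contracts):
    """Stand up the provider, verify all contracts, return a CI exit code."""
    server = HTTPServer(("127.0.0.1", 0), OrdersHandler)  # port 0 = ephemeral
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        host, port = server.server_address
        failures = replay(f"http://{host}:{port}", contracts)
    finally:
        server.shutdown()
        server.server_close()
    for failure in failures:
        print("CONTRACT FAILURE:", failure)
    return 1 if failures else 0
```

In a CI job, the script would end with something like `sys.exit(run_gate(contracts))` so that a failed verification fails the provider pipeline.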

This is where friction appears in traditional setups:

• Spinning services up is slow.

• Data setup is tricky.

• People get blocked by “false positives” (ambiguous expectations).

With qAPI:

• You can often verify against a known staging environment where qAPI already runs tests.

• qAPI’s agentic layer can help you classify failures:

- A real contract break.

- A data or environment issue.

- A change where contract and consumer both need an update.

Step 6: Define a contract evolution policy

Contracts will change. The question is whether you do it intentionally.

Let’s make it simple by adding rules like:

• Non‑breaking changes:

- Adding new optional fields, or adding new endpoints under new versions, is OK.

• Breaking changes:

- Removing fields, changing types, or altering semantics requires:

- A new API version, or coordinated contract updates and consumer releases.
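Rules like these can be partly automated. The sketch below compares two versions of a schema and sorts the differences into breaking and safe changes. The schema layout (`type` and `required` per field) and the `classify_change` name are assumptions for illustration. Two caveats: treating a newly added required field as breaking is a conservative choice that mainly matters for request bodies, and changes in semantics cannot be detected by a structural diff at all.

```python
def classify_change(old, new):
    """Compare two schemas of the form {field: {"type": str, "required": bool}}."""
    breaking, safe = [], []
    for name, spec in old.items():
        if name not in new:
            breaking.append(f"removed: {name}")
        elif new[name]["type"] != spec["type"]:
            breaking.append(
                f"type changed: {name} ({spec['type']} -> {new[name]['type']})"
            )
    for name, spec in new.items():
        if name in old:
            continue
        if spec["required"]:
            # Conservative assumption: a new required field may break callers.
            breaking.append(f"added required: {name}")
        else:
            safe.append(f"added optional: {name}")
    return {"breaking": breaking, "safe": safe}


# Hypothetical before/after schemas for the order payload.
ORDER_V1 = {
    "totalAmount": {"type": "number", "required": True},
    "currency": {"type": "string", "required": True},
}
ORDER_V2 = {
    "totalAmount": {"type": "string", "required": True},
    "discountCode": {"type": "string", "required": False},
}
```

Comparing `ORDER_V1` with `ORDER_V2` flags the type change and the removed `currency` field as breaking, and the new `discountCode` field as safe.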

You also need a deprecation flow:

• Mark contracts as deprecated in the registry.

• Warn consumers when they rely on behavior that will soon be removed.

• Enforce removal after a grace period.

Note: A deprecation flow is a planned process, widely used in software development, for retiring old features, libraries, or APIs while keeping backward compatibility during the transition.
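The deprecation flow above might be enforced with a check like the following sketch. The `DEPRECATED_FIELDS` table, the `legacyTotal` field, the removal date, and the shape of the usage data are all hypothetical. Before the removal date, consumers that still read a deprecated field get a warning; after it, the same usage is reported as a violation.

```python
from datetime import date

# Hypothetical registry metadata: deprecated field -> planned removal date.
DEPRECATED_FIELDS = {"legacyTotal": date(2026, 6, 30)}


def deprecation_report(usage, today):
    """usage maps consumer name -> set of response fields it reads."""
    warnings, violations = [], []
    for consumer in sorted(usage):
        for name in sorted(usage[consumer].intersection(DEPRECATED_FIELDS)):
            removal = DEPRECATED_FIELDS[name]
            message = f"{consumer} still reads {name} (removal on {removal.isoformat()})"
            if today > removal:
                violations.append(message)  # grace period is over
            else:
                warnings.append(message)    # still inside the grace period
    return warnings, violations
```

A pipeline could print the warnings on every run and fail only when the violations list is non-empty.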

Because qAPI continuously monitors usage, it can:

• Tell you whether a field marked “deprecated” is still being used by any consumer.

• Identify “dead” behavior that no one calls anymore but still exists.

Step 7: Extend contract testing to third‑party and AI APIs

If you’re using Stripe or OpenAI, you can’t publish contracts for them to verify, but you can:

• Code your expectations for their APIs as contracts.

• Periodically validate them against sandboxes or canary test calls.

• Alert when behavior drifts (e.g., new fields, changed error formats).
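One simple way to detect drift is to keep a baseline response from the vendor sandbox and compare its top-level shape with a fresh one. The sketch below is an assumption-laden illustration: `shape_of` and `drift` are hypothetical helpers, the sample payloads are invented, and only top-level keys are compared, using Python type names such as `str` and `dict`.

```python
def shape_of(payload):
    """Map each top-level key to the Python type name of its value."""
    return {key: type(value).__name__ for key, value in payload.items()}


def drift(baseline, current):
    """Report added, removed, and retyped top-level keys."""
    old, new = shape_of(baseline), shape_of(current)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "retyped": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Invented sandbox responses: last week's error payload vs. today's.
BASELINE = {"error": {"code": "card_declined"}, "status": 402}
CURRENT = {"error": "card_declined", "status": 402, "request_id": "r-1"}
```

Here `drift(BASELINE, CURRENT)` reports `request_id` as added and `error` as retyped, which is the kind of change worth an alert before it reaches production code paths.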

For AI APIs:

• You usually can’t assert exact text. But you can assert shape:

- Top‑level keys exist (choices, usage, etc.).

- Certain fields are always present and correctly typed.

- Error payloads follow a known structure.
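A shape assertion of this kind can be written as a small recursive check. In the sketch below, the spec format, the `shape_errors` helper, and the nested field names inside `choices` and `usage` are assumptions chosen for illustration; consult the vendor's documentation for the real response schema. A one-element list in the spec means that every item in the response list must match that element.

```python
def shape_errors(payload, spec, path="$"):
    """Check that payload has at least the keys and types described by spec."""
    errors = []
    for key, expected in spec.items():
        here = f"{path}.{key}"
        if key not in payload:
            errors.append(f"{here}: missing")
            continue
        value = payload[key]
        if isinstance(expected, dict):
            if isinstance(value, dict):
                errors += shape_errors(value, expected, here)
            else:
                errors.append(f"{here}: expected object")
        elif isinstance(expected, list):
            if not isinstance(value, list):
                errors.append(f"{here}: expected list")
                continue
            for index, item in enumerate(value):
                if isinstance(item, dict):
                    errors += shape_errors(item, expected[0], f"{here}[{index}]")
                else:
                    errors.append(f"{here}[{index}]: expected object")
        elif not isinstance(value, expected):
            errors.append(
                f"{here}: expected {expected.__name__}, got {type(value).__name__}"
            )
    return errors


# Hypothetical shapes; only structure is asserted, never the generated text.
COMPLETION_SHAPE = {
    "choices": [{"message": {"content": str}}],
    "usage": {"total_tokens": int},
}
ERROR_SHAPE = {"error": {"message": str, "type": str}}
```

The same helper covers both successful responses and error payloads, so a changed error format shows up as a list of precise paths instead of a parsing exception deep in application code.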

qAPI’s testing process is particularly useful here:

• It can spot when a third‑party response shape has changed.

• It can also detect if the endpoint’s behavior is now different from last week across your stack, not just in one test.

What “Strong” Contract Testing Looks Like in 2026

A mature contract testing practice doesn’t mean “We have Pact in one repo.”

It looks more like:

• Every critical integration has clearly defined contracts owned by both sides.

• Consumer expectations are written as tests and run on every PR.

• Providers verify against all known consumer contracts before deployment.

• Contracts, specs, and actual traffic stay in sync—because an intelligent system is watching.

• Third‑party and AI integrations have encoded expectations and drift detection.

• Breaking changes are rare, planned, and communicated.

qAPI doesn’t replace contract tools outright—it orchestrates and amplifies them:

• Uses traffic + specs to infer and update contracts.

• Reduces manual maintenance by generating and adapting tests.

• Watches for behavioral drift between provider, consumers, and docs.

• Runs contract and functional tests as a unified, agentic layer in your pipelines.

If You Want to Start This Month

If this all sounds great but large, here’s a realistic 30‑day plan that any lean team can implement:

Week 1

• Pick 1–2 high‑risk integrations (e.g., payments ↔ orders ↔ ledger).

• Document 3–5 key interactions each as contracts (even if only prose initially).

Week 2

• Add consumer tests for these interactions in both directions (frontend/service side).

• Run them locally and in consumer CI.

Week 3

• Create a simple contract registry (could be Git + naming convention to start).

• Add a provider‑side verification job for one service.

Week 4

• Integrate qAPI or a similar intelligent platform, if available, to:

- Observe real traffic and validate your contracts are realistic.

- Highlight differences between what you think happens and what actually happens.

- Start surfacing contract drift warnings in CI.

Once that first integration is stable and giving you signal, then scale to others.

Contract testing isn’t about worshipping specs; it’s about preventing your services from surprising each other. In a world where microservices, third‑party APIs, and AI‑generated code change fast, you need a way to encode expectations, verify them automatically, and spot changes early.

If your team is already investing in API testing with something like qAPI, contract testing is the natural next layer: it takes you from “our endpoints respond” to “our services evolve without breaking the people who rely on them.”