Microservices and APIs are now everywhere, along with CI/CD and “automation”-driven dashboards. These terms sound great and feel like the logical next step, and there is a good chance your team is already planning or launching them. In fact, your team has likely made ambitious plans to integrate and scale its existing development systems.

In theory, with these tools and strategies in place, your teams should be shipping confidently. In reality, releases are still delayed, on-call rotations are messy, and production incidents keep slipping through. Something is clearly wrong, but you can’t locate it, and what’s worse than not knowing what the problem is with your APIs? So, if your API development cycle looks confusing, it’s important to understand how it works in practice and how to simplify it.

Recent industry reports highlight a growing gap between intent and execution:

• Flaky automated tests are on the rise as suites and pipelines grow more complex.

• 99% of organizations reported at least one API security issue in the past 12 months.

• API incidents are now the leading root cause of major outages across industries.

So, the problem isn’t “we don’t test APIs.” The problem is that most teams are:

• Maintaining brittle scripts that can’t keep up with change.

• Testing the wrong things (lack of clarity of API functionality and purpose).

• Doing far too much manually in a world that moves too fast.

To fix it, you have to start by naming what’s actually going wrong.

The Challenges That Quietly Break API Testing

Here is a pattern we see repeated across nearly every mid-to-large engineering organization:

The team upgrades its API testing tool. The new version ships self-healing tests, built-in security scanning, automated contract validation, and real-time schema drift detection. The changelog is impressive.

And then the team uses it… exactly the way they used their legacy tools.

Same manually written collections. Same hardcoded tokens and URLs. Same “happy-path-only” assertions. Same nightly batch runs instead of per-commit feedback. The tools have evolved, but the underlying practices haven’t matched the pace.

This gap manifests in three specific, measurable ways:

• Test Maintenance Overload: In teams with brittle, heavily scripted suites, maintenance still consumes 40–60% of total automation time. Modern tooling offers contract-driven test generation that can slash this number—but only if teams restructure their suites to actually support it.

• Shallow CI/CD Integration: Many teams still run Postman collections locally before a deploy or rely on a single nightly run. While modern tools support deep, per-commit pipeline integration, the internal workflows often remain stuck in a manual mindset.

• Wasted Self-Healing Capabilities: When a response schema changes—a renamed property or a shifted data type—modern tools can auto-apply updates. However, teams that still hardcode every assertion by hand never trigger these capabilities, forcing them to fix every break manually.

Eventually, coverage stops growing. This isn’t because the team lacks ambition; it’s because every engineering resource is exhausted just keeping existing tests alive. To protect pipeline velocity, teams start disabling “noisy” tests. Coverage quietly erodes in the most critical areas: error handling, authentication, and performance.

Meanwhile, the few teams that have modernized their practices alongside their tools report faster releases, fewer regressions, and significantly less time spent on test maintenance.

The gap isn’t about which tool you pick. It’s about whether your testing practice has caught up to what the tool can actually do.

Test Maintenance Overload

Every contract change—new field, new auth scheme, slightly different response—can break dozens or hundreds of tests if they’re heavily scripted and hardcoded. Studies of automation practices note that maintenance can consume 40–60% of test automation time in large suites when design is brittle.

That leads to two predictable outcomes:

• Coverage stops growing because teams are just keeping old tests alive.

• People start disabling “noisy” tests to protect the pipeline, shrinking coverage quietly.

AI is in the Workflow—But Teams Aren’t Ready

This is the widest gap in API testing right now — and it is growing fast.

In 2026, AI-assisted test generation, anomaly detection, and MCP-powered local model integrations are no longer experimental; they are strategic. They ship inside tools. They power workflows at companies that are moving faster, catching deeper issues, and releasing with a fraction of the manual overhead that legacy teams still carry.

But most teams haven’t absorbed this shift. Here is what that looks like in practice:

• Test creation is still entirely manual. A developer or QA engineer reads the spec (if it exists), writes assertions by hand, and updates them by hand when something changes. Every. Single. Time.

• Flaky test diagnosis is still a human guessing game. Instead of ML-based classification that identifies patterns in test instability — timing dependencies, shared state, environment drift — teams assign someone to “look into it” during a sprint where nobody has slack.

• Coverage gaps stay invisible. Without AI analyzing traffic patterns, schema evolution, or historical incident data, teams have no systematic way to know what they’re not testing.

The dangerous gaps — around error handling, authorization edge cases, timeout behavior — stay hidden until they show up in production.

Research into ML-based flaky test classification shows promising results in identifying problematic tests automatically. But in practice, most teams don’t benefit from this intelligence yet — not because it doesn’t exist, but because their tooling and workflows haven’t been updated to use it.

Teams that still rely entirely on manual test design are not just slower. They are structurally unable to keep pace with API-first competitors who use AI to auto-generate edge-case coverage, self-heal broken tests after contract changes, and surface risk patterns humans would miss.

Distributed Microservices: Failure Points Everywhere

This is the one problem that architects understand in theory but testing teams feel in practice.

Microservices delivered on their core promise: teams can develop, deploy, and scale services independently. But that autonomy added a category of failure that traditional testing frameworks were never designed to catch.

Most failures in distributed API systems don’t happen inside a service. They happen at the boundaries where services interact.

Let’s see what this means:

The Boundary Problem

Consider a simple example. Service A changes a response field — maybe a field name, maybe a format.

The change seems harmless. Service A’s tests pass. Service B’s tests also pass because nothing in its local environment changed.

But in actual practice, when Service B consumes the updated response, the system breaks. This is contract drift.

Both teams did their testing correctly — but no one tested the interaction.
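One way to test the interaction is a consumer-side contract check: Service B writes down the fields it actually reads, and Service A's pipeline verifies every response against that list. The sketch below is a minimal illustration, not a full contract-testing framework. The field names, the `SERVICE_B_EXPECTS` contract, and the sample responses are all hypothetical.

```python
from typing import Any

# Hypothetical contract: the fields Service B reads from Service A, with expected types.
SERVICE_B_EXPECTS = {"order_id": str, "total_cents": int, "status": str}


def contract_violations(response: dict[str, Any], expected: dict[str, type]) -> list[str]:
    """Return a readable list of ways the response breaks the consumer's expectations."""
    problems = []
    for field, expected_type in expected.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            actual = type(response[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


# Service A's response before and after a "harmless" rename and format change.
before = {"order_id": "A-100", "total_cents": 1999, "status": "paid"}
after = {"order_id": "A-100", "total": "19.99", "status": "paid"}

print(contract_violations(before, SERVICE_B_EXPECTS))  # no violations
print(contract_violations(after, SERVICE_B_EXPECTS))   # reports the missing total_cents field
```

Run in Service A's pipeline, a check like this fails the build at the moment of the rename, before Service B ever sees the new response.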

Why Failures Don’t Stay Local Anymore

Distributed systems also fail in chains, so a single fault surfaces as failures across multiple services. These failures are hard to trace because no single team sees the full picture and no single test suite reproduces the issue.

This is what makes cascading failures so difficult to catch before production.

Scale Makes Testing Fragmented

Large organizations now operate hundreds or thousands of APIs across many teams.

Without strong governance, testing becomes fragmented because:

• Teams invent their own testing practices

• Duplicate APIs and duplicate tests emerge

• Breaking changes ripple across services without clear ownership

Over time, the system becomes harder to reason about and harder to test reliably.

The hardest problems appear when testing real workflows. Business processes such as the following rarely involve a single API:

• Loan origination

• Claims processing

• Order fulfillment

Instead, they span multiple services interacting in sequence.

Testing these flows requires:

• Orchestrating chains of API calls

• Maintaining state between steps

• Coordinating with external systems

These stateful, multi-service workflows remain one of the hardest areas of API testing.
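To make the three requirements above concrete, here is a rough sketch of a workflow test that chains calls and carries state between steps. `FakeBackend` is an in-memory stand-in invented for this example; a real suite would make HTTP calls to separate services, and the order-fulfillment steps are assumptions chosen only for illustration.

```python
from typing import Callable


class FakeBackend:
    """In-memory stand-in for the order, payment, and shipping services."""

    def __init__(self) -> None:
        self.orders: dict[str, dict] = {}

    def create_order(self, item: str) -> dict:
        order_id = f"order-{len(self.orders) + 1}"
        self.orders[order_id] = {"id": order_id, "item": item, "status": "created"}
        return self.orders[order_id]

    def pay(self, order_id: str) -> dict:
        order = self.orders[order_id]
        order["status"] = "paid"
        return order

    def ship(self, order_id: str) -> dict:
        order = self.orders[order_id]
        if order["status"] != "paid":
            raise RuntimeError("cannot ship an unpaid order")
        order["status"] = "shipped"
        return order


Step = Callable[[FakeBackend, dict], None]


def step_create(api: FakeBackend, ctx: dict) -> None:
    # Store the generated ID so later steps can use it.
    ctx["order_id"] = api.create_order("book")["id"]


def step_pay(api: FakeBackend, ctx: dict) -> None:
    assert api.pay(ctx["order_id"])["status"] == "paid"


def step_ship(api: FakeBackend, ctx: dict) -> None:
    assert api.ship(ctx["order_id"])["status"] == "shipped"


def run_workflow(api: FakeBackend, steps: list[Step]) -> dict:
    """Run steps in order, passing one shared context dict between them."""
    ctx: dict = {}
    for step in steps:
        step(api, ctx)
    return ctx


ctx = run_workflow(FakeBackend(), [step_create, step_pay, step_ship])
print(ctx)
```

Keeping the state in an explicit context, rather than in module-level variables, makes it easy to reorder or drop steps and test the failure paths, such as shipping before payment.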

Endpoint Coverage Is Still a Misleading Metric

Many teams still measure success by endpoint coverage. If every API endpoint has tests, the system should be stable — in theory.

But in 2026, most failures don’t happen inside endpoints. They happen between services.

Testing APIs in isolation may improve coverage metrics, but it does little to guarantee system reliability in production.

Test Data Complexity Amplifies the Problem

Even well-designed tests become unreliable when test data is poorly managed. Shared databases, reused identifiers, and hidden dependencies between tests often lead to the classic scenario:

A test passes when run alone but fails when the entire suite runs.

Our view is that API testing isn’t failing because teams aren’t writing tests.

It feels broken because modern architectures are distributed, while many testing approaches were designed for monolithic systems.

Testing individual APIs is easy. Testing how hundreds of APIs behave together — under real conditions — is where the real challenge begins.

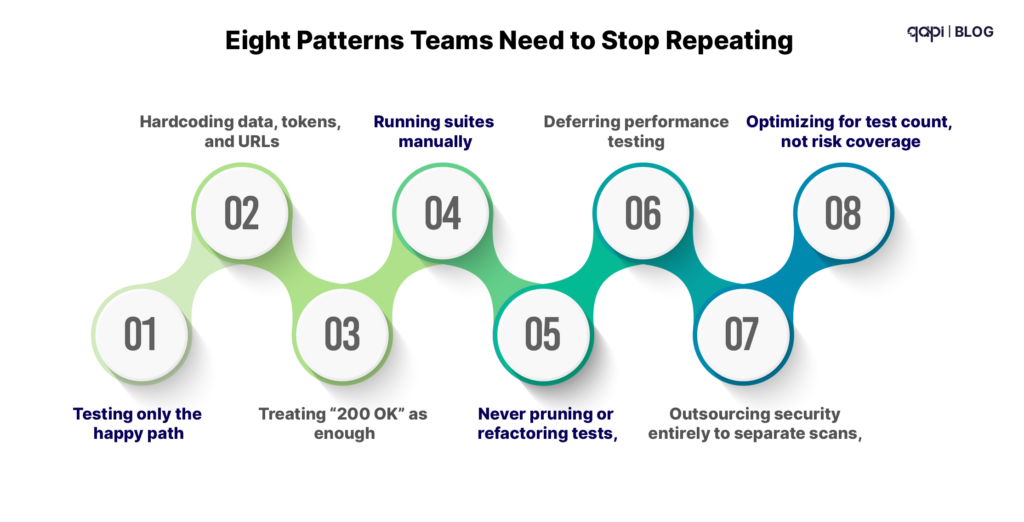

Eight Patterns Teams Need to Stop Repeating

On top of those structural challenges, certain habits make everything worse:

- Testing only the happy path while most incidents come from edge cases and failures.

- Hardcoding data, tokens, and URLs so suites are brittle and environment-specific.

- Treating “200 OK” as enough, instead of validating schemas, business rules, and error behavior.

- Running suites manually instead of integrating them as first-class citizens in CI/CD.

- Never pruning or refactoring tests, letting suites rot into noisy, low-signal collections.

- Deferring performance testing until right before launch—or never.

- Outsourcing security entirely to separate scans, instead of embedding negative and abuse case tests into normal design.

- Optimizing for test count, not risk coverage, chasing big numbers instead of meaningful protection.

Recognize any of those? Most teams do.
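Two of the habits above, treating “200 OK” as enough and hardcoding tokens and URLs, are cheap to fix in code. The following is a minimal sketch under assumed names: the `API_BASE_URL` and `API_TOKEN` environment variables, the order response shape, and the total-equals-sum-of-items rule are all illustrative, not taken from any specific API.

```python
import os

# Assumed environment variable names; the defaults are placeholders for local runs.
# A real suite would pass these to its HTTP client instead of hardcoding them.
BASE_URL = os.environ.get("API_BASE_URL", "http://localhost:8080")
API_TOKEN = os.environ.get("API_TOKEN", "")


def check_order_response(status: int, body: dict) -> list[str]:
    """Validate status, response shape, and one business rule, not status alone."""
    errors = []
    if status != 200:
        errors.append(f"unexpected status {status}")
    for field, kind in {"id": str, "items": list, "total_cents": int}.items():
        if not isinstance(body.get(field), kind):
            errors.append(f"{field} missing or not {kind.__name__}")
    if not errors:
        # Business rule (hypothetical): the total must equal the sum of line items.
        expected_total = sum(i["price_cents"] * i["qty"] for i in body["items"])
        if body["total_cents"] != expected_total:
            errors.append(f"total_cents {body['total_cents']} != sum of items {expected_total}")
    return errors


good = {"id": "o1", "items": [{"price_cents": 500, "qty": 2}], "total_cents": 1000}
wrong_total = {"id": "o1", "items": [{"price_cents": 500, "qty": 2}], "total_cents": 900}

print(check_order_response(200, good))         # no errors
print(check_order_response(200, wrong_total))  # status is 200, but the total is wrong
```

The second response would pass a status-only assertion even though it violates the pricing rule, which is the kind of defect that happy-path checks let through.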

The Questions High-Performing Teams Have Started Asking

The pivot from “more tests” to “better testing” often starts with new questions:

- Which 10 APIs, if they fail, hurt us the most?

- For those APIs, are we testing error handling, security, and performance—or just “does the happy path return 200”?

- What percentage of our test failures in the last month were flaky vs. real issues?

- How many of our external APIs have at least basic auth and input validation tests, given that almost all organizations have experienced API security incidents?

- How much time did we spend maintaining tests last quarter versus expanding coverage?

- Do our tests adapt when contracts change, or are we rewriting scripts by hand each time?
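The flaky-versus-real question can often be answered from CI history alone. The sketch below uses a simple heuristic, stated here as an assumption: if the same test both failed and passed on the same commit (for example, after a retry), the failure is counted as flaky; if it only ever failed on that commit, it is counted as real. The `RUNS` data is invented for the example.

```python
from collections import defaultdict

# Hypothetical CI history: (test name, commit SHA, passed?) for every run, retries included.
RUNS = [
    ("test_login", "abc", False), ("test_login", "abc", True),
    ("test_checkout", "abc", False), ("test_checkout", "abc", False),
    ("test_search", "def", False), ("test_search", "def", True),
    ("test_profile", "def", True),
]


def classify_failures(runs):
    """Label each failing (test, commit) pair as 'flaky' or 'real'."""
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    labels = {}
    for key, seen in outcomes.items():
        if False in seen:
            # Mixed results on identical code point to flakiness, not a regression.
            labels[key] = "flaky" if True in seen else "real"
    return labels


labels = classify_failures(RUNS)
flaky = sum(1 for label in labels.values() if label == "flaky")
print(f"{flaky}/{len(labels)} failing test/commit pairs were flaky")
```

This heuristic only works if the pipeline retries or reruns failed tests on the same commit. Without reruns, every failure looks real.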

If you don’t like your answers today, you’re not alone. But that’s also where a new approach becomes compelling.

What “Good” Looks Like—and Where qAPI Fits

A modern API testing practice isn’t about perfection. It’s about:

• Change-aware tests driven by contracts (OpenAPI, consumer-driven contracts) that flag breaking changes early.

• Risk-aligned coverage, where business-critical APIs and failure modes (security, performance, correctness) get disproportionate attention.

• CI/CD-native automation, with fast, reliable feedback on every meaningful change.

• Built-in functional, process, and performance testing in one place, not as separate efforts.

• Intelligent, agentic behavior that reduces maintenance and flakiness instead of amplifying them.

This is exactly the gap qAPI is designed to fill.

Instead of another brittle, script-heavy framework, qAPI uses an agentic, AI-infused approach to:

• Detect API changes and highlight which tests are now at risk.

• Reduce manual maintenance by reusing test cases where possible.

• Help teams focus on meaningful coverage—especially around orchestrated flows, security, and performance—rather than chasing raw test counts.

• Integrate deeply with modern pipelines so API tests become a reliable, fast feedback mechanism, not a last-minute hurdle.

If your current reality looks like constant flakiness, endless maintenance, and a growing sense that “we’re still blind in the riskiest places,” it’s a strong signal that your API testing strategy needs to evolve.

Want to See What Agentic API Testing Looks Like?

If you recognized yourself in more than a handful of the challenges or mistakes above, you’re exactly the kind of team qAPI was built for.

Here are three low-friction next steps:

- Run a quick API testing health check: Take one of your most critical APIs, list the top 5 failure modes that would hurt you, and check how many you actually test today.

- Shortlist one or two painful workflows: Think of a flaky, business-critical flow (like payments, onboarding, or loan approval) and imagine what it would mean to have tests that adapt as that workflow evolves.

- See qAPI in action on your own APIs: Instead of reading another generic best-practices guide, bring one real use case and see how an agentic, change-aware approach can cut flakiness, shrink maintenance, and expand meaningful coverage without throwing more people at the problem.

You don’t have to boil the ocean to fix API testing. But you do need tools and practices that match the complexity you’re actually operating in.

If you’re ready to move beyond fragile scripts and slow feedback into intelligent, agentic API testing, qAPI is a good place to start.